Key Takeaways

- Transitioning from a prompt-based protocol to a general reasoning runtime enables the creation of a domain-agnostic cognitive engine.

- A canonical reasoning state acts as a universal intermediate representation that normalizes diverse data types into a shared format for consistent processing.

- Implementing protocol steps as system primitives within a three-layer architecture allows for more reliable and structured execution of complex reasoning tasks.

- Defining reasoning as a series of observable state transitions makes AI workflows pausable, resumable, and transferable between agents.

Who this is for

AI engineers developing domain-agnostic reasoning runtimes and agent architectures.

Where this fits

Parts 1-6 established 5PP as a working protocol, following the public canonical reference, Five-Point Protocol. Part 5 introduced SPINE as the structural layer. This part turns the corner: it covers what changes when 5PP stops being a prompt scaffold and becomes a runtime engine inside SPINE.

The Upgrade

Parts 1 through 6 treated 5PP as a protocol - a set of steps that a human or agent follows when working on a task. Clarify, scope, plan, execute, verify. Applied manually. Applied in prompts. Applied through agent pipelines.

That is useful. But it is not the end.

The next step is to stop thinking of 5PP as a protocol wrapper and start treating it as a general reasoning runtime.

Instead of:

Input -> Apply five steps -> Answer

Move to:

Input -> Normalize -> Reason over shared model -> Choose tools -> Verify -> Persist

That is the jump from a useful prompt framework to a domain-agnostic cognitive engine.

The Missing Layer

Right now, every domain is different. An image has regions, labels, and OCR text. A repository has files, symbols, and dependencies. A note has headings, bullets, and claims. A business process has actors, steps, risks, and goals.

A reusable engine needs all of those converted into one common internal format.

This is the canonical reasoning state - the intermediate representation that makes "any input domain" real instead of aspirational.

Everything becomes:

entities, relations, goals, constraints, uncertainties, evidence, actions, verdicts

This shared model is where the five-point protocol runs. Not over raw text. Over structured state.
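To make the shared model concrete, here is a minimal sketch of a canonical reasoning state as Python dataclasses. The eight top-level fields come from the list above; the `Entity` and `Relation` shapes, their attribute names, and the `to_json` helper are assumptions for illustration, not the SPINE schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Entity:
    id: str
    kind: str                                   # e.g. "file", "ocr_block", "claim"
    attrs: dict = field(default_factory=dict)   # domain-specific details


@dataclass
class Relation:
    source: str   # id of the source Entity
    target: str   # id of the target Entity
    kind: str     # e.g. "imports", "left_of", "supports"


@dataclass
class ReasoningState:
    """Hypothetical canonical state: one shape for every input domain."""
    entities: list = field(default_factory=list)
    relations: list = field(default_factory=list)
    goals: list = field(default_factory=list)
    constraints: list = field(default_factory=list)
    uncertainties: list = field(default_factory=list)
    evidence: list = field(default_factory=list)
    actions: list = field(default_factory=list)
    verdicts: list = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so the state can be persisted as-is.
        return json.dumps(asdict(self), sort_keys=True)


# A repository and an image would both end up in this same shape.
state = ReasoningState(
    entities=[Entity("f1", "file", {"path": "app.py"}),
              Entity("f2", "file", {"path": "util.py"})],
    relations=[Relation("f1", "f2", "imports")],
    goals=["explain the import graph"],
)
```

Because the state is plain data, it can be serialized, diffed, and handed to any operator without that operator knowing which domain it came from.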

Three-Layer Architecture

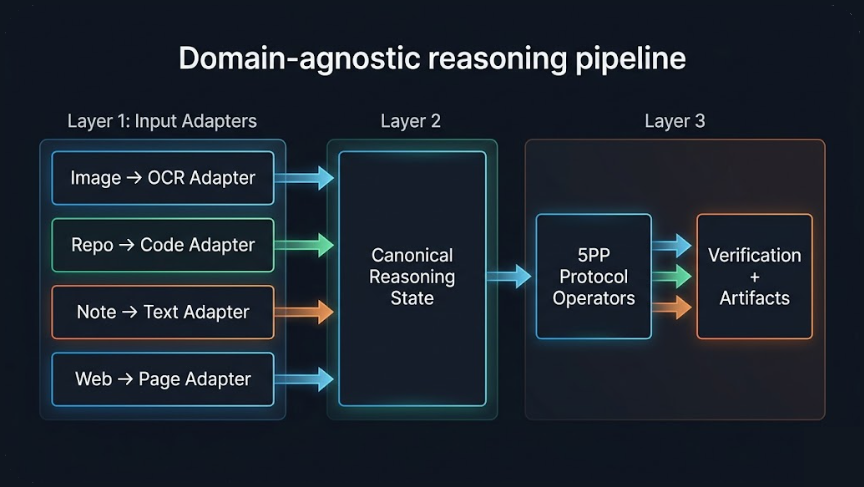

Domain-agnostic reasoning pipeline

The runtime has three layers:

Layer 1: Domain Adapters

Each input type gets an adapter that extracts structured objects. An image adapter produces OCR blocks and spatial relationships. A repo adapter produces files, functions, and imports. A note adapter produces claims, tasks, and entities. Their job is only to produce normalized data.
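As a rough sketch of what an adapter contract could look like, the example below defines a hypothetical `DomainAdapter` protocol with two small implementations. The `extract` method name, the dict shapes, and the note syntax (`- [ ]` for tasks, `- ` for claims) are assumptions chosen for illustration; the repo adapter handles only Python sources via the standard `ast` module.

```python
import ast
from typing import Protocol


class DomainAdapter(Protocol):
    """Hypothetical contract: raw domain input in, normalized objects out."""

    def extract(self, raw) -> list[dict]:
        ...


class NoteAdapter:
    """Turns a plain-text note into task and claim objects."""

    def extract(self, raw: str) -> list[dict]:
        objects = []
        for line in raw.splitlines():
            text = line.strip()
            if text.startswith("- [ ]"):
                objects.append({"kind": "task", "text": text[5:].strip()})
            elif text.startswith("- "):
                objects.append({"kind": "claim", "text": text[2:].strip()})
        return objects


class RepoAdapter:
    """Turns {path: python_source} into file, function, and import objects."""

    def extract(self, raw: dict[str, str]) -> list[dict]:
        objects = []
        for path, source in raw.items():
            objects.append({"kind": "file", "path": path})
            for node in ast.walk(ast.parse(source)):
                if isinstance(node, ast.FunctionDef):
                    objects.append({"kind": "function", "name": node.name, "file": path})
                elif isinstance(node, ast.Import):
                    for alias in node.names:
                        objects.append({"kind": "import", "module": alias.name, "file": path})
        return objects
```

Neither adapter reasons about its input. Each one only extracts structure, which keeps domain knowledge at the edge of the system.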

Layer 2: Canonical Reasoning Model

The heart of the system. Every adapter's output is converted into the same format - entities, relations, goals, constraints, evidence. This is the surface the protocol operates on.

Layer 3: Reasoning Operators

Each protocol step becomes a system primitive:

- Clarify maps raw input into objectives and assumptions.

- Scope produces boundaries and stop conditions.

- Plan generates ordered steps with dependencies.

- Execute calls tools - LLMs, retrievers, analyzers, search.

- Verify checks drift, unsupported claims, and confidence.

The protocol steps match directly - but now as system primitives instead of prompt text. In this series, SPINE is the project home of this runtime pattern.
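One way to picture "steps as primitives" is as plain functions that take the state and return a new state. The sketch below is an assumption-heavy toy: the state is a dict, the key names (`task`, `goals`, `steps`, `results`) are hypothetical, the tool is any callable from string to string, and `verify` only checks for empty outputs rather than drift or confidence.

```python
from typing import Callable

State = dict  # hypothetical: the canonical reasoning state as a plain dict


def clarify(state: State) -> State:
    # Raw task text becomes an explicit objective plus a list of assumptions.
    return {**state, "objective": state["task"].strip(), "assumptions": []}


def scope(state: State) -> State:
    # Boundaries and a stop condition are recorded before any planning happens.
    return {**state, "boundaries": state.get("constraints", []),
            "stop_when": "all steps done"}


def plan(state: State) -> State:
    # One step per goal; each step depends on the one before it.
    steps = [{"id": i, "goal": goal, "depends_on": [i - 1] if i else []}
             for i, goal in enumerate(state["goals"])]
    return {**state, "steps": steps}


def execute(state: State, tool: Callable[[str], str]) -> State:
    # The tool stands in for an LLM, retriever, analyzer, or search call.
    results = [{"step": s["id"], "output": tool(s["goal"])} for s in state["steps"]]
    return {**state, "results": results}


def verify(state: State) -> State:
    # Toy check: a step with no output counts as unsupported.
    unsupported = [r["step"] for r in state["results"] if not r["output"]]
    return {**state, "unsupported": unsupported,
            "verdict": "fail" if unsupported else "pass"}


def run(state: State, tool: Callable[[str], str]) -> State:
    for operator in (clarify, scope, plan):
        state = operator(state)
    return verify(execute(state, tool))
```

Each operator returns a new dict instead of mutating its input, so every intermediate state remains available for inspection.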

The State Machine

Once the protocol operates on structured state, the engine becomes a state machine with observable transitions:

INGESTED -> NORMALIZED -> CLARIFIED -> SCOPED -> PLANNED -> EXECUTING -> VERIFYING -> COMPLETED

Each transition produces an external artifact. The state can also branch to FAILED or NEEDS_HUMAN at any point.

This makes the engine pausable, resumable, inspectable, and, crucially, transferable to another agent mid-run.
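The transitions above can be sketched as a small state machine with JSON checkpoints. The phase names are taken from the sequence above; the `Run` class, its method names, and the choice to give FAILED and NEEDS_HUMAN no outgoing transitions are assumptions for this example, since the article does not say what follows them.

```python
import json
from enum import Enum


class Phase(str, Enum):
    INGESTED = "INGESTED"
    NORMALIZED = "NORMALIZED"
    CLARIFIED = "CLARIFIED"
    SCOPED = "SCOPED"
    PLANNED = "PLANNED"
    EXECUTING = "EXECUTING"
    VERIFYING = "VERIFYING"
    COMPLETED = "COMPLETED"
    FAILED = "FAILED"
    NEEDS_HUMAN = "NEEDS_HUMAN"


HAPPY_PATH = [Phase.INGESTED, Phase.NORMALIZED, Phase.CLARIFIED, Phase.SCOPED,
              Phase.PLANNED, Phase.EXECUTING, Phase.VERIFYING, Phase.COMPLETED]


def allowed(current: Phase) -> set:
    """Next phase on the happy path, or a branch to FAILED / NEEDS_HUMAN."""
    if current not in HAPPY_PATH or current is Phase.COMPLETED:
        return set()  # assumption: off-path and final phases stop here
    following = HAPPY_PATH[HAPPY_PATH.index(current) + 1]
    return {following, Phase.FAILED, Phase.NEEDS_HUMAN}


class Run:
    def __init__(self, phase: Phase = Phase.INGESTED, artifacts=None):
        self.phase = phase
        self.artifacts = artifacts or []

    def advance(self, target: Phase, artifact: dict) -> None:
        if target not in allowed(self.phase):
            raise ValueError(f"illegal transition {self.phase.value} -> {target.value}")
        # Every transition leaves an external artifact behind.
        self.artifacts.append({"phase": target.value, "artifact": artifact})
        self.phase = target

    def checkpoint(self) -> str:
        return json.dumps({"phase": self.phase.value, "artifacts": self.artifacts})

    @classmethod
    def resume(cls, blob: str) -> "Run":
        data = json.loads(blob)
        return cls(Phase(data["phase"]), data["artifacts"])
```

Pausing is `checkpoint()`, resuming is `Run.resume(blob)`, and handing off to another agent amounts to sending it the checkpoint string.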

Externalization Without Exposure

A critical design point: you externalize the contracts, not the mind.

The internal reasoning stays inside the engine. What the outside world sees is:

- Input contracts (task, constraints, success criteria)

- Normalized representations

- Step artifacts (clarification, scope, plan, execution log, verification report)

- Tool call events

- Memory write candidates

Raw chain-of-thought is not the interface. Structured reasoning artifacts are. This keeps the engine auditable without making it fragile.
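A minimal sketch of that boundary, assuming a hypothetical `StepArtifact` type: internal scratch reasoning can live on the object, but only the contract fields cross the export boundary. The artifact kinds come from the list above; the field names and the `export` method are illustrative assumptions.

```python
import json
from dataclasses import dataclass, field

# Step artifact kinds named in the list above; anything else is rejected at the boundary.
PUBLIC_KINDS = {"clarification", "scope", "plan", "execution_log", "verification_report"}


@dataclass
class StepArtifact:
    kind: str
    summary: str
    data: dict = field(default_factory=dict)
    # Internal reasoning: kept out of repr() and never included in export().
    scratch: str = field(default="", repr=False)

    def export(self) -> str:
        if self.kind not in PUBLIC_KINDS:
            raise ValueError(f"not an exportable artifact kind: {self.kind}")
        public = {"kind": self.kind, "summary": self.summary, "data": self.data}
        return json.dumps(public, sort_keys=True)
```

The point of the design is that consumers depend on a small, stable artifact shape, so the internal reasoning can change without breaking them.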

The Decision Policy

Once the reasoning model exists, the next capability is a policy layer that decides:

- When to search the web

- When to ask another model

- When confidence is too low to continue

- When to escalate to human review

- When to store something in long-term memory

Without policy, the engine reasons. With policy, the engine behaves.

Policy is what separates a system that can think from a system that can be trusted.
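A policy layer of this kind can start as a few explicit rules. The sketch below is one possible shape, not a prescribed design: the `Signals` fields, the threshold values, and the rule ordering are all assumptions that a real system would tune.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"
    SEARCH_WEB = "search_web"
    ASK_OTHER_MODEL = "ask_other_model"
    ESCALATE_TO_HUMAN = "escalate_to_human"


@dataclass
class Signals:
    confidence: float        # 0.0 to 1.0, e.g. reported by the verify step
    missing_evidence: bool   # True when a claim has no supporting evidence


def decide(signals: Signals, halt_below: float = 0.3,
           proceed_above: float = 0.8) -> Decision:
    """Hypothetical rule order: safety first, then evidence, then a second opinion."""
    if signals.confidence < halt_below:
        return Decision.ESCALATE_TO_HUMAN   # too uncertain to continue alone
    if signals.missing_evidence:
        return Decision.SEARCH_WEB          # try to ground the claim
    if signals.confidence < proceed_above:
        return Decision.ASK_OTHER_MODEL     # middling confidence: cross-check
    return Decision.CONTINUE


def worth_remembering(confidence: float, verified: bool,
                      threshold: float = 0.8) -> bool:
    # Assumption: only verified, high-confidence results go to long-term memory.
    return verified and confidence >= threshold
```

Keeping the rules in one function makes the engine's behavior reviewable: changing when it escalates is a one-line diff, not a prompt rewrite.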

The Shortest Formulation

Build a canonical internal reasoning state that all domains map into, then run the five-point protocol over that state instead of over raw input.

That is the turning point. After that, everything else becomes modular: adapters, tools, memory, recursion, multiple agents, learning loops.

Further reading in this series

- Previous: Part 6 - Beyond Content

- Next: Part 8 - The Recursive Engine

- 5PP origin: Part 1 - From Giant Meta-Prompt to 5PP

- SPINE as structural layer: Part 5

- Public canonical 5PP reference: Five-Point Protocol

This article is Part 7 of the From Meta-Prompt to Asset Factory series on Adaptivearts.ai.

Previously: Beyond Content: Research Loops, Autoresearch, and Embodied Agents - the same machinery applied to research and physical systems. Next: The Recursive Engine - how the protocol becomes self-scaling.