Key Takeaways

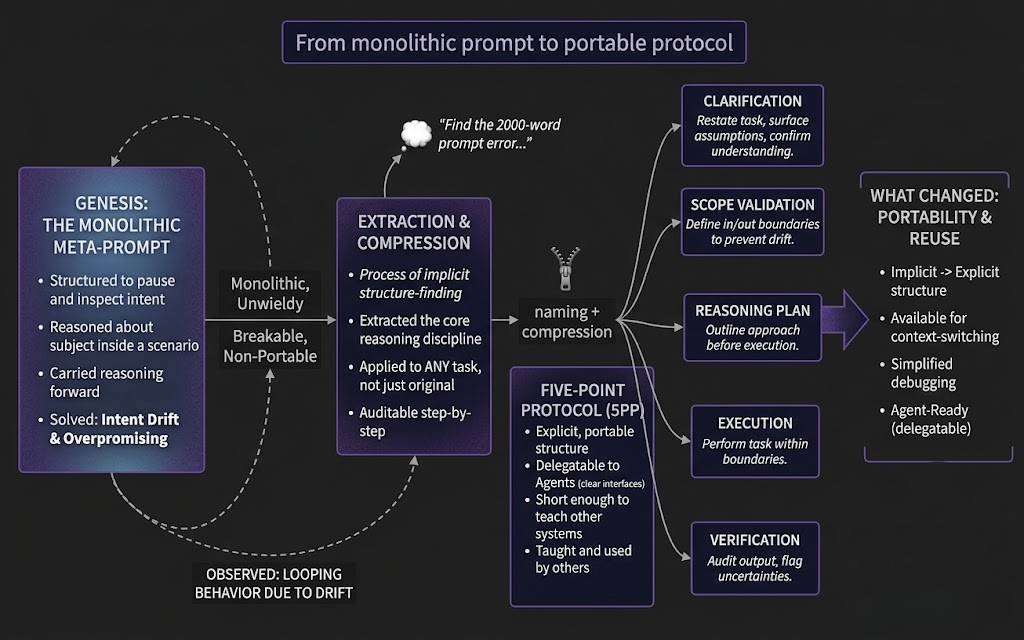

- Transitioning from monolithic meta-prompts to modular protocols makes AI reasoning portable, auditable, and delegatable to agents.

- The Five-Point Protocol improves AI reliability by enforcing explicit steps for clarification, scope validation, planning, execution, and verification.

- Replacing implicit structures within large prompts with explicit frameworks prevents reasoning drift and allows for easier debugging across diverse contexts.

- Modular protocols allow high-level reasoning discipline to be applied to any task and shared across different AI systems without extensive rewriting.

Who this is for

Prompt engineers who want to turn monolithic AI prompts into structured reasoning protocols.

The Genesis

A couple of years ago, while building an Obsidian plugin, something emerged that was not planned.

The goal was straightforward: get an AI system to do more than respond to isolated instructions. Instead of answering a single question, the prompt was structured to make the system pause, inspect intent, reason about the subject inside a scenario, and carry that reasoning structure forward into the next scenario.

The result was large. Very large.

A single meta-prompt that tried to contain everything: alignment, scope control, reasoning transparency, correction mechanisms, and transfer logic. It worked - sometimes impressively so - but it was unwieldy. It could not be shared, delegated, or applied to a different context without rewriting significant portions.

It was, in effect, a monolith.

What It Actually Did

Despite its size, the genesis meta-prompt solved a real problem. It forced AI systems to operate differently:

- Intent inspection - the system had to identify what the user actually wanted, not just what they said

- Scenario reasoning - the system had to think about the subject within its context, not in isolation

- Transfer - the reasoning structure could carry over to the next task without starting from zero

- Self-correction - built-in audit steps caught drift and overpromising before output

In my own use, this was the kind of prompting where I observed looping behavior. The point was not to trick the model, but to expose how reasoning and intent drift when they are not clearly bounded.

That moment made something clear: the prompt was doing more than generating answers. It was imposing a reasoning discipline.

The Problem with Monoliths

A monolithic prompt has obvious limitations:

- It cannot be shared without extensive explanation

- It cannot be delegated to agents - they need structured interfaces, not walls of text

- It breaks when applied to contexts it was not designed for

- It is difficult to debug - when something goes wrong, finding the cause in a 2000-word prompt is slow

The structure was sound. The packaging was not.

Compression, Not Invention

What followed was not the creation of something new. It was compression.

The implicit structure inside the meta-prompt was extracted, named, and reduced to five explicit steps:

- Clarification - restate the task, surface assumptions, confirm understanding

- Scope Validation - define what is in scope and what is not, to prevent drift

- Reasoning Plan - outline the approach before executing, allowing early correction

- Execution - perform the task within the established boundaries

- Verification - audit the output against the original intent, flag uncertainties

This became the Five-Point Protocol (5PP).
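To make the compression concrete, here is a minimal sketch of the five steps expressed as data rather than as prose buried in a large prompt. The step names and descriptions come from the list above; the `Step` class, the `render_protocol` function, and the exact wording of the rendered prompt are illustrative assumptions, not the original meta-prompt or an official 5PP template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    instruction: str


# Names and descriptions follow the five steps listed in the article.
# The data layout and prompt wording are assumptions for illustration.
FIVE_POINT_PROTOCOL = (
    Step("Clarification", "Restate the task, surface assumptions, confirm understanding."),
    Step("Scope Validation", "Define what is in scope and what is not."),
    Step("Reasoning Plan", "Outline the approach before executing."),
    Step("Execution", "Perform the task within the established boundaries."),
    Step("Verification", "Audit the output against the original intent and flag uncertainties."),
)


def render_protocol(task: str, steps=FIVE_POINT_PROTOCOL) -> str:
    """Build a short prompt that asks for one labelled section per step."""
    lines = [f"Task: {task}", "", "Work through these steps in order, labelling each one:"]
    for number, step in enumerate(steps, start=1):
        lines.append(f"{number}. {step.name}: {step.instruction}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_protocol("Summarize the release notes for version 2.0"))
```

Because the steps live in a plain tuple, the same preamble can be attached to any task string, which is one way to read the portability claim.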

The protocol did not add new capabilities. It made the existing ones portable.

From monolithic prompt to portable protocol

What Changed

With 5PP, the same reasoning discipline could now be:

- Applied to any task - not just the ones the meta-prompt was designed for

- Delegated to agents - each step is a clear interface

- Taught to other systems - the protocol is short enough to include in any context

- Audited - each step produces visible output that can be reviewed

The transition was not from "bad prompt" to "good protocol." It was from implicit structure to explicit structure. The reasoning was always there. What changed was the ability to reuse it.
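The delegation and audit points can be sketched as a small runner that handles each step as a separate call and keeps every intermediate output. This is a hedged illustration only: `run_protocol`, `fake_agent`, and the prompt format are hypothetical names and choices, and `ask` stands in for whatever model or agent would actually handle a step.

```python
from typing import Callable, Dict, List

# Step names follow the article; everything else here is an assumption.
STEPS = ["Clarification", "Scope Validation", "Reasoning Plan", "Execution", "Verification"]


def run_protocol(task: str, ask: Callable[[str], str]) -> List[Dict[str, str]]:
    """Run each step as its own call and keep every output for review.

    `ask` is a hypothetical stand-in for a model or agent call.
    """
    trail: List[Dict[str, str]] = []
    for step in STEPS:
        # Each step sees the task plus the visible output of earlier steps.
        history = "\n".join(f"[{r['step']}] {r['output']}" for r in trail)
        prompt = f"Task: {task}\n{history}\nNow perform step: {step}"
        trail.append({"step": step, "output": ask(prompt)})
    return trail


def fake_agent(prompt: str) -> str:
    """Placeholder agent that only echoes which step it was asked to do."""
    return "done: " + prompt.rsplit("step: ", 1)[-1]


if __name__ == "__main__":
    for record in run_protocol("Draft a changelog entry", fake_agent):
        print(f"{record['step']}: {record['output']}")
```

The returned list is the audit trail: one record per step, in order, so a reviewer can see where an output first diverged from the task instead of searching a single long prompt.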

On Speed

It is easy to assume this took a long time.

It did not.

The genesis meta-prompt appeared during an Obsidian plugin build. The compression into 5PP happened shortly after. The effective time from first idea to usable protocol was measured in hours, not months.

The structure was powerful from the start. What changed was the ability to use it.

This article is Part 1 of the From Meta-Prompt to Asset Factory series on Adaptivearts.ai.

Next: The First Proof: Using 5PP to Align a Newsletter - how the protocol held under real working conditions.