Key Takeaways

- Recursive AI systems must prioritize containing and absorbing volatility through multi-layered control mechanisms rather than attempting to eliminate it entirely.

- Implementing confidence gating and circuit breakers prevents weak signals and errors from propagating through abstraction layers and compromising the system's integrity.

- Deliberate operational friction, such as increasing verification steps and reducing recursion depth, is a vital stability feature when a system detects high uncertainty or output divergence.

- Robust AI architectures should function as control systems that utilize redundant reasoning and temporal stabilization to ensure insights remain consistent before being promoted.

Who this is for

AI engineers designing resilient recursive systems and autonomous agents.

The Real Constraint

Recursion gives the system power. But power without stability produces a different outcome than intended:

Not a robust cognition system, but a chaotic recursive failure generator.

The question is no longer "can this scale and recurse?" but "can it remain stable when the ground shifts?"

Five Types of Volatility

The system faces volatility from multiple sources simultaneously:

Input Volatility

Changing data, noisy signals, incomplete information, adversarial inputs

Execution Volatility

Tool and API failures, latency spikes, partial results

Model Volatility

LLM drift, inconsistent outputs, stochastic reasoning

System Volatility

Node failures, distributed timing issues, message loss

Recursive Volatility

Errors amplified across levels, bad abstractions contaminating higher layers - the most dangerous kind

The core principle:

You do not eliminate volatility. You contain, absorb, and regulate it.

Five Control Layers

1. Circuit Breakers

Every recursion step must be able to fail safely. If confidence drops below the threshold, the step halts and requests more data or human review rather than propagating uncertainty upward.
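A minimal sketch of a circuit breaker around a single recursion step. The names `StepResult` and `run_step_with_breaker`, the default threshold of 0.5, and the choice to treat a raised exception as a halt are illustrative assumptions, not part of the original protocol.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Tuple


class StepStatus(Enum):
    OK = "ok"
    HALTED = "halted"


@dataclass
class StepResult:
    status: StepStatus
    value: Any = None
    confidence: float = 0.0
    reason: str = ""


def run_step_with_breaker(
    step: Callable[[], Tuple[Any, float]],
    threshold: float = 0.5,  # assumed default; tune per system
) -> StepResult:
    """Run one step; halt instead of passing a weak or failed result upward."""
    try:
        value, confidence = step()
    except Exception as exc:  # tool or API failure: fail safely
        return StepResult(StepStatus.HALTED, reason=f"step raised: {exc}")
    if confidence < threshold:
        # The value is deliberately dropped so it cannot leak upward.
        return StepResult(
            StepStatus.HALTED,
            confidence=confidence,
            reason="confidence below threshold; request more data or human review",
        )
    return StepResult(StepStatus.OK, value=value, confidence=confidence)
```

A caller would check `result.status` before recursing further, and route halted steps to a data-collection or review queue.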

2. Confidence Gating

The most important mechanism. Promotion between abstraction levels is gated by confidence:

- High confidence (>= 0.8) - promote to next level

- Medium confidence (>= 0.5 and < 0.8) - allow local refinement only

- Low confidence (< 0.5) - reject, do not promote

This prevents weak signals from climbing abstraction layers and becoming "truth."
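The three bands can be expressed as a small gate function. This is a sketch: the function name `gate` and the returned labels are hypothetical, and a score of exactly 0.8 is treated as high confidence, following the ">= 0.8" rule.

```python
def gate(confidence: float) -> str:
    """Map a confidence score in [0, 1] to a promotion decision."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")
    if confidence >= 0.8:
        return "promote"          # high: advance to the next abstraction level
    if confidence >= 0.5:
        return "refine_locally"   # medium: keep working at the current level
    return "reject"               # low: do not promote
```

Keeping the gate as a pure function makes the thresholds easy to test and to tighten later.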

3. Redundant Reasoning

Instead of one engine producing one result, run multiple evaluations and aggregate. Consensus across parallel runs is a strong signal. Divergence is a volatility indicator.
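One way to sketch this is a majority vote over repeated evaluations. The helper name `consensus`, the default of five runs, and the 0.6 agreement cutoff are assumptions chosen for illustration; real answers would usually need normalizing before they can be compared for equality.

```python
from collections import Counter
from typing import Callable, Hashable, Optional, Tuple


def consensus(
    evaluate: Callable[[], Hashable],
    runs: int = 5,
    min_agreement: float = 0.6,
) -> Tuple[Optional[Hashable], float]:
    """Run `evaluate` several times and return (answer, agreement ratio).

    The answer is None when the runs diverge too much to trust any of them.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    answers = [evaluate() for _ in range(runs)]
    top_answer, count = Counter(answers).most_common(1)[0]
    agreement = count / runs
    if agreement >= min_agreement:
        return top_answer, agreement
    return None, agreement  # divergence: treat as a volatility indicator
```

The agreement ratio is returned in both cases so that a low value can also feed volatility detection.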

4. Temporal Stabilization

Volatile systems fluctuate, so require persistence over time: no single-pass promotion. An insight must remain consistent across multiple runs before it advances.
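A sliding window is one simple way to enforce this. In the sketch below, the class name `StabilityWindow` and the default of three consecutive runs are assumptions; the article does not specify how many runs count as stable.

```python
from collections import deque
from typing import Any, Deque


class StabilityWindow:
    """Allow promotion only after N consecutive identical observations."""

    def __init__(self, required_runs: int = 3) -> None:
        if required_runs < 1:
            raise ValueError("required_runs must be at least 1")
        self._recent: Deque[Any] = deque(maxlen=required_runs)

    def observe(self, insight: Any) -> bool:
        """Record the latest result; return True once the window is full and uniform."""
        self._recent.append(insight)
        window_full = len(self._recent) == self._recent.maxlen
        return window_full and all(item == self._recent[0] for item in self._recent)
```

Because the window slides, a single contradicting run resets progress: the insight has to hold for another full window before it can advance.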

5. Layer Isolation

Each abstraction level acts as a shock absorber. If Level 1 is chaotic, Level 2 receives only filtered, aggregated, validated outputs. Volatility is dampened upward, not amplified.
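The filter, aggregate, validate sequence might look like the following sketch. The `Finding` type, the `absorb` function, the median as aggregate, and the defaults (confidence of at least 0.8, at least two supporting findings) are all illustrative assumptions.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass(frozen=True)
class Finding:
    topic: str
    value: float
    confidence: float


def absorb(
    level1: List[Finding],
    min_confidence: float = 0.8,
    min_support: int = 2,
) -> Dict[str, float]:
    """Return the only view of Level 1 that Level 2 is allowed to see."""
    by_topic: Dict[str, List[float]] = {}
    for finding in level1:
        if finding.confidence >= min_confidence:      # filter weak findings
            by_topic.setdefault(finding.topic, []).append(finding.value)
    return {
        topic: median(values)                         # aggregate per topic
        for topic, values in by_topic.items()
        if len(values) >= min_support                 # validate: enough support
    }
```

Level 2 never touches raw Level 1 findings, so a noisy outlier or an unsupported claim is dropped at the boundary instead of being carried upward.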

Friction as a Feature

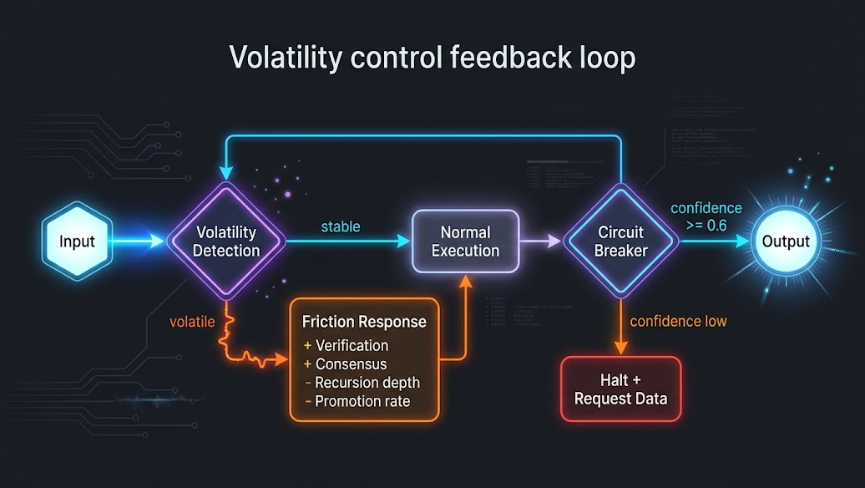

Volatility control feedback loop

Healthy systems slow down under uncertainty. This is deliberate.

When volatility is detected:

- Increase verification steps

- Require consensus before promotion

- Reduce recursion depth

- Limit promotion to higher levels

This is not a bug in the system's speed. It is a feature of its stability.
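The four friction responses listed above can be modeled as adjustments to an operating policy. This is a sketch under stated assumptions: the `Policy` fields, their default values, and the step size of one are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Policy:
    verification_steps: int = 1
    require_consensus: bool = False
    max_recursion_depth: int = 4
    max_promotion_level: int = 3


def add_friction(policy: Policy) -> Policy:
    """Return a stricter policy to use while volatility is detected."""
    return replace(
        policy,
        verification_steps=policy.verification_steps + 1,             # verify more
        require_consensus=True,                                       # consensus before promotion
        max_recursion_depth=max(1, policy.max_recursion_depth - 1),   # recurse less
        max_promotion_level=max(0, policy.max_promotion_level - 1),   # promote less far
    )
```

Returning a new immutable policy rather than mutating the old one keeps the normal-conditions policy available for when the system calms down.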

Volatility Detection Signals

The system needs to recognize when conditions are degrading:

Output divergence - multiple runs producing different answers

Low confidence clustering - many items falling below threshold

Contradictions - A and not-A both appearing in results

Tool instability - API failures, inconsistent responses

Recursive amplification - higher levels becoming less stable than lower ones

Each signal triggers different friction responses. The system adapts its own rigor to match conditions.
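A rough sketch of how the five signals could be computed from run telemetry. The function name, the input shapes, and every cutoff (0.6 agreement, more than half of items below threshold, 20% tool failure rate) are assumptions for illustration; `level_spread` is a hypothetical per-level instability score ordered from the lowest level to the highest.

```python
from collections import Counter
from typing import Sequence, Set, Tuple


def detect_signals(
    answers: Sequence[str],
    confidences: Sequence[float],
    claims: Set[Tuple[str, bool]],
    tool_failures: int,
    tool_calls: int,
    level_spread: Sequence[float],
    confidence_threshold: float = 0.5,
) -> Set[str]:
    """Return the names of the volatility signals currently firing."""
    signals: Set[str] = set()
    if answers:
        top_count = Counter(answers).most_common(1)[0][1]
        if top_count / len(answers) < 0.6:
            signals.add("output_divergence")
    if confidences:
        low = sum(1 for c in confidences if c < confidence_threshold)
        if low / len(confidences) > 0.5:
            signals.add("low_confidence_clustering")
    # A statement asserted as both true and false is a contradiction.
    if any((statement, not truth) in claims for statement, truth in claims):
        signals.add("contradiction")
    if tool_calls > 0 and tool_failures / tool_calls > 0.2:
        signals.add("tool_instability")
    # Any higher level that is less stable than the level below it.
    if any(hi > lo for lo, hi in zip(level_spread, level_spread[1:])):
        signals.add("recursive_amplification")
    return signals
```

The returned set can then be mapped to friction responses; which response pairs with which signal is a design decision the article leaves open.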

The Autopilot Analogy

The right mental model is not a brain. It is an autopilot system in turbulent air.

It needs:

Sensors - inputs and volatility signals

Filters - normalization and noise reduction

Controllers - the five-point protocol

Stabilizers - confidence gates and consensus

Fail-safes - circuit breakers and human escalation

The system must behave like a control system, not just a reasoning system. That means feedback loops, damping, thresholds, stabilization, and error correction.

The Five Non-Negotiable Rules

If you implement nothing else for stability, implement these:

- No promotion without meeting the confidence threshold

- No abstraction without consensus or consistency

- No recursion if instability is detected

- Every step must be verifiable

- Every output must be discardable

The distilled principle:

Introduce stabilization layers - confidence gating, redundancy, temporal validation, and circuit breakers - so that uncertainty is absorbed and filtered at each recursion level rather than amplified.

This article is Part 9 of the From Meta-Prompt to Asset Factory series on Adaptivearts.ai.

Previously: The Recursive Engine - how the protocol becomes self-scaling. Next: Toward Production-Grade Reasoning - the seven dimensions that separate prototype from production.