Key Takeaways

- Category confusion occurs in technical writing when plausible implications are presented with the same level of confidence as direct, evidence-based findings.

- The DIAL-4 framework prevents reasoning drift by forcing a structural distinction between four specific modes of claims: Deduction, Inference, Abatement, and Legitimation.

- Deductive reasoning must remain strictly evidence-bound, reporting only what is directly supported by the source to avoid overstating confidence.

- Inferences should be clearly identified as interpretive extensions of findings to prevent the conversion of mere possibility into perceived certainty.

- Distinguishing between conceptual inferences and practical abatement claims helps clarify whether a finding suggests a theoretical meaning or a specific reduction in operational friction.

Who this is for

Technical writers and AI practitioners who want to sharpen the logical clarity of complex documentation.

The Collapse

Consider a claim that appears in a technical article: "A context-stack architecture reduces prompt engineering effort."

Read quickly, it sounds like a conclusion. But what kind of conclusion? Is it directly supported by evidence? Is it a plausible inference from how the architecture works? Is it a statement about practical burden reduction? Or is it a verdict that has survived structured scrutiny?

Most writing - and most AI output - treats these as the same thing. A plausible implication is presented with the same confidence as a direct finding. A practical benefit is stated as if it were proof. A bounded verdict and an unbounded inference sit in the same paragraph with no signal that they carry different reasoning weight.

This is category confusion, and it is the most common reasoning failure that goes uncaught. Not because the claims are wrong, but because the reader cannot tell what kind of claim they are looking at.

DIAL-4 exists to force those apart.

DIAL-4: Four Modes, Four Burdens

DIAL-4 is a didactic reasoning framework. The name encodes the four modes: Deduction, Inference, Abatement, Legitimation. Each mode asks a different question, carries a different reasoning burden, and protects against a different failure. The distinctions grew out of watching how reasoning drifts when claim types are allowed to blur - and formalizing the separations that prevent that drift.

Deduction

Question: What can be responsibly concluded from the source?

Deduction extracts the strongest defensible conclusions from available material. It is evidence-bound. The reasoning burden is that every deductive claim must point to something present in the source - a finding, a measurement, a structural fact. "The context stack separates input into six named layers: global, character, command, constraints, context, input" is a deduction. The code exists. The layers are named. The claim is directly supported.

Failure it protects against: Overstating confidence. Smuggling inference into deduction - making something sound established when it is actually implied. This is the most common category error in technical writing: treating a reasonable interpretation as if it were a finding.

Contrast with Inference: Deduction says "the source supports this." Inference says "the source suggests this." The boundary between them is where most reasoning drift begins.

Inference

Question: What does this suggest or imply?

Inference extends beyond direct findings while staying tethered to them. It is where meaning is drawn, where broader implications are identified, where forward-looking interpretation happens. "This layer separation likely reduces prompt rewriting across projects" is an inference. The architecture exists (deduction), and the inference is that reuse follows from separation. Plausible, reasonable - but not directly established.

Failure it protects against: Turning possibility into certainty. An inference is always interpretive. It must acknowledge that it reaches beyond what the source directly says, even when the reach is short. The moment an inference is stated as a finding, it has silently changed category.

Contrast with Deduction: Deduction is bounded by what is present. Inference is bounded by what is reasonable. The evidence bar is different. Deduction requires "this is here." Inference requires "this follows from what is here."

Contrast with Abatement: Inference explains meaning. Abatement identifies practical impact. "This suggests layer reuse is possible" is inference. "This may reduce the time teams spend rebuilding prompts" is abatement. The first is conceptual. The second is operational.

Abatement

Question: What burden, difficulty, cost, dependency, or friction may be reduced?

Abatement identifies practical changes - things that become easier, cheaper, less constrained, or less uncertain if the claim holds. "Teams using this architecture may spend less time debugging prompt interactions" is an abatement claim. It speaks to practical impact, not to truth or meaning.

The name was chosen deliberately over "Reduction" because abatement carries a stronger sense of something being lessened or relieved - a burden lifted - rather than a simple numerical decrease. The term may sound like framework jargon, but the meaning is precise: what becomes less hard?

Failure it protects against: Treating reduced friction as proof of feasibility or maturity. This is where business cases go wrong. Something becoming easier does not mean it works. Something reducing cost does not mean it is ready. Abatement claims are inherently softer than deductions - they describe what may change, not what has been established.

The governing principle: Utility is not proof. A practical benefit, no matter how compelling, does not validate the underlying claim. It is a separate kind of knowledge.

Contrast with Inference: Inference draws meaning. Abatement identifies operational impact. They often coexist - the same source may support both an inference ("this suggests reuse is possible") and an abatement ("this may reduce rebuild time") - but they are different claims carrying different weight.

Legitimation

Question: What survives explicit scope control, method discipline, and verification?

Legitimation filters the other three modes through a formal audit layer. It is where claims are tested against boundaries: what was in scope? What was actually confirmed versus inferred versus uncertain? What must be explicitly marked as out of scope?

The name was chosen over "Verification" because legitimation captures something broader than checking correctness. It asks: what remains valid after structured scrutiny? A claim can be technically correct but out of scope. A finding can be supported but overgeneralized. Legitimation is the mode that catches these - not by adding new evidence, but by enforcing discipline on what the other three modes produced.

Applied to the running example: "The context-stack architecture reduces prompt engineering effort" becomes, under legitimation: "The architecture reduces one specific kind of effort - layer-level reuse across projects. It does not reduce domain-specific prompt tuning, which remains per-project work. The reuse claim is supported for the six-layer structure as implemented. It has not been tested across teams or at scale."

Failure it protects against: Confusing formal structure with actual rigor. A legitimation pass that merely restates conclusions in cautious language is not legitimation - it is decoration. Real legitimation identifies boundaries, marks what was not established, and constrains the scope of what the other three modes can claim.

The governing principle: Verification bounds meaning. Legitimation exists to constrain drift, hype, and overreach. It is the mode that prevents an article from saying more than its evidence supports.
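The four modes can be sketched as a small typed structure. This is only an illustration, assuming claims are tagged by hand; the names `Mode`, `Claim`, and `split_by_mode` are hypothetical and not part of DIAL-4 itself. The point is that once every claim carries its mode, the categories cannot silently blur.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    """The four DIAL-4 modes, each paired with the question it asks."""
    DEDUCTION = "What can be responsibly concluded from the source?"
    INFERENCE = "What does this suggest or imply?"
    ABATEMENT = "What burden, difficulty, cost, dependency, or friction may be reduced?"
    LEGITIMATION = "What survives explicit scope control, method discipline, and verification?"


@dataclass(frozen=True)
class Claim:
    text: str
    mode: Mode  # assigned by the writer; this sketch does not classify automatically


def split_by_mode(claims):
    """Group claim texts by mode so different claim types never share a bucket."""
    grouped = {mode: [] for mode in Mode}
    for claim in claims:
        grouped[claim.mode].append(claim.text)
    return grouped


claims = [
    Claim("The context stack separates input into six named layers.", Mode.DEDUCTION),
    Claim("This layer separation likely reduces prompt rewriting across projects.", Mode.INFERENCE),
    Claim("Teams using this architecture may spend less time debugging prompt interactions.", Mode.ABATEMENT),
]
grouped = split_by_mode(claims)
```

An empty `grouped[Mode.LEGITIMATION]` bucket is itself a useful signal: nothing in the draft has yet been passed through scope control.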

DIAL-4+: How Strong Is the Claim?

Knowing the claim type is necessary but insufficient. Consider two deductions:

- "The context stack has six named layers" - strong deduction. The code exists. The layers are enumerable.

- "The context stack enforces layer isolation at runtime" - weak deduction. There is structural separation, but whether enforcement is strict depends on configuration. The evidence is partial.

Both are deductions. Both say "the source supports this." But they carry different weight, and a reader who treats them identically will overestimate the second.

DIAL-4+ adds strength gradation within each mode. Instead of a binary "this is a deduction," the claim also carries a signal about how well-supported it is within its type. The axes are:

Confidence: How certain is the claim within its mode? A strong inference based on multiple converging signals differs from a speculative inference based on one analogy.

Evidence quality: What kind of evidence backs the claim? Implementation code is stronger than design documents. Measured benchmarks are stronger than architectural arguments. Observed behavior is stronger than projected behavior.

Scope: How broadly does the claim apply? "This works in the tested configuration" is narrower than "this works generally." Both may be valid deductions, but the scope changes what you can do with them downstream.

This matters because downstream handling changes with strength. A strong deduction can anchor a reference document. A weak deduction needs a caveat. A strong inference can motivate a design decision. A weak inference should be tagged as speculative and revisited when more evidence arrives. Without strength gradation, all claims of the same type look equal - and the reader has no basis for deciding which ones to trust more.

Evidence before implication. Within each mode, the stronger claims come first. The weaker ones are explicitly marked as weaker. This is not bureaucracy - it is how you prevent a chain of reasoning from quietly weakening as it extends. And it is what makes the next step possible: once claims are typed and strength-graded, a reasoning discipline like 5PP can govern what work follows from each one differently.
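A rough sketch of how strength gradation might drive downstream handling follows. It makes a loud simplifying assumption: the three axes (confidence, evidence quality, scope) are collapsed into a single `strong` flag, which the framework itself does not do. `GradedClaim`, `HANDLING`, and `strongest_first` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GradedClaim:
    text: str
    mode: str     # "deduction" or "inference" in this sketch
    strong: bool  # assumption: confidence, evidence quality, and scope folded into one flag


# Handling rules as stated in the text; the lookup-table shape is hypothetical.
HANDLING = {
    ("deduction", True): "can anchor a reference document",
    ("deduction", False): "needs a caveat",
    ("inference", True): "can motivate a design decision",
    ("inference", False): "tag as speculative and revisit when more evidence arrives",
}


def handling(claim):
    """Look up the downstream handling for a claim's mode and strength."""
    return HANDLING.get((claim.mode, claim.strong), "handling not specified")


def strongest_first(claims):
    """Order claims so strong ones precede weak ones (stable within each group)."""
    return sorted(claims, key=lambda c: not c.strong)


claims = [
    GradedClaim("The context stack enforces layer isolation at runtime", "deduction", False),
    GradedClaim("The context stack has six named layers", "deduction", True),
]
ordered = strongest_first(claims)
```

Here `ordered` lists the six-layer claim first, and `handling(ordered[1])` returns the caveat rule for the weaker runtime-isolation claim.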

The Document Surface

Claim types and strength gradation govern what is being said and how strongly. But there is a third question: where does the claim live?

A deduction about the context stack's six layers belongs in a reference document - exact, structured, lookup-oriented. An explanation of why layer separation matters for reuse belongs in a different kind of document - interpretive, contextual, reasoning-oriented. A walkthrough of how to configure the stack belongs in a how-to guide. A guided first-time setup belongs in a tutorial.

This is the Diataxis arrangement: four document forms (tutorial, how-to, reference, explanation), each serving a different reader need (learn, do, look up, understand). The key insight for DIAL-4 is not Diataxis itself - it is that document form shapes how claims are received. A deduction placed inside a long explanatory essay loses its precision. An inference placed inside a reference table looks like a fact. The form of the document influences whether the reader correctly identifies the claim type.

The anti-mixing rule follows directly: a note weakens when it collapses multiple document forms without clear boundaries. When a tutorial embeds reference tables, when an explanation buries how-to steps in its middle, when a reference document editorializes - the claim types blur because the document surface no longer signals what kind of knowledge the reader is encountering.

For the running example: the deduction ("six named layers") lives in a reference note. The inference ("likely reduces rewriting") lives in an explanation. The abatement ("may reduce debugging time") lives in a how-to context where teams are evaluating whether to adopt the architecture. The legitimation ("reduces layer-level reuse effort specifically, not domain tuning") lives in an explanation that sets boundaries. Each claim finds its natural surface - and the reader can tell what kind of knowledge they are looking at partly because of where it lives.
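The placement in the running example can be written down as a lookup, which also makes the anti-mixing rule checkable. This is a sketch under assumptions: the `SURFACE` mapping reflects only this example, not a general rule, and `misplaced` is a hypothetical helper.

```python
# Surface chosen for each claim type in the running example only.
SURFACE = {
    "deduction": "reference",
    "inference": "explanation",
    "abatement": "how-to",
    "legitimation": "explanation",
}


def misplaced(claims):
    """Return (text, actual_form, expected_form) for claims sitting on the wrong surface."""
    return [
        (text, form, SURFACE[mode])
        for text, mode, form in claims
        if SURFACE[mode] != form
    ]


notes = [
    ("six named layers", "deduction", "reference"),
    # An inference placed in a reference table, where it would read as a fact.
    ("likely reduces rewriting", "inference", "reference"),
]
problems = misplaced(notes)
```

Only the second note is flagged, because an inference in a reference document is exactly the blur the anti-mixing rule warns about.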

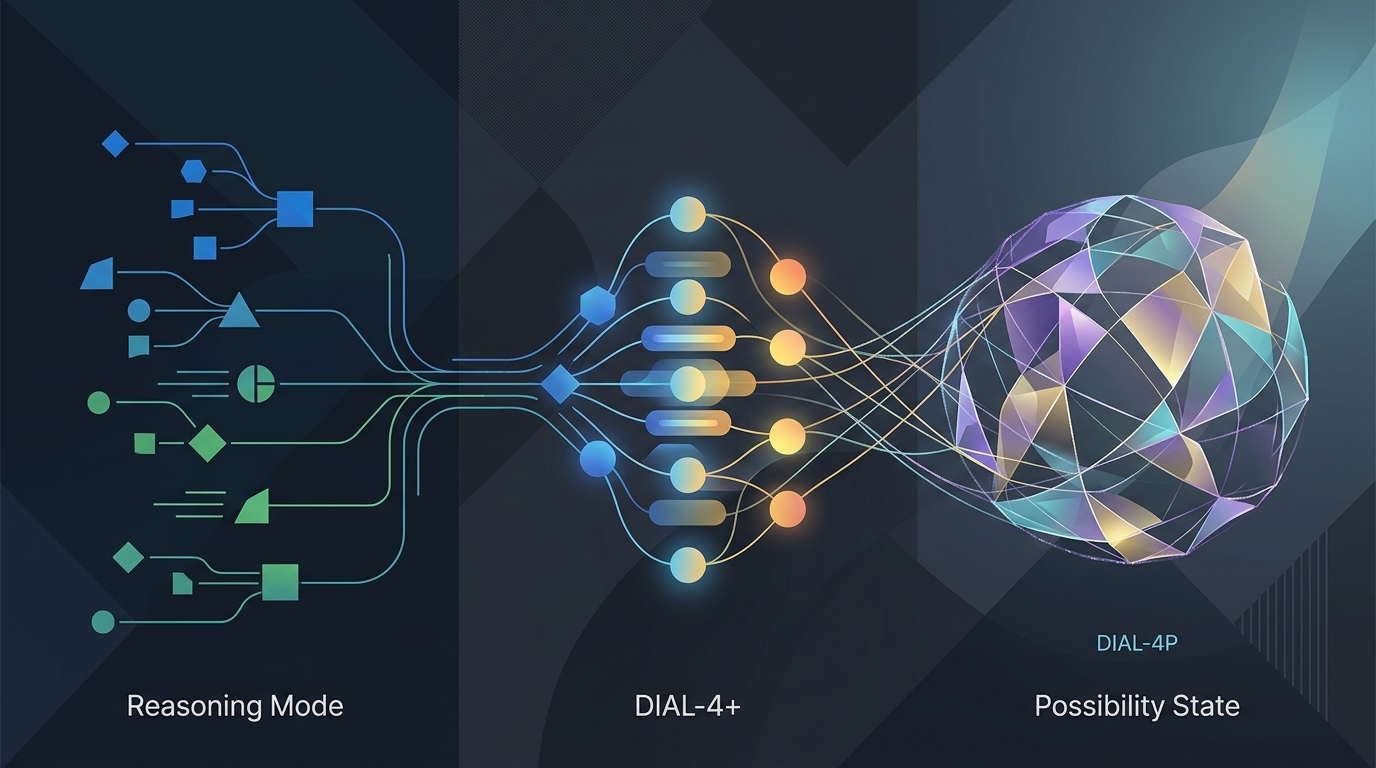

DIAL-4P: The Composed Stack

DIAL-4P composes the three layers above (claim type, claim strength, document form) with a fourth, possibility governance, into a single operational framework. The P stands for Possibility.

DIAL-4 governs claim type: deduction, inference, abatement, legitimation. It answers "what kind of claim is this?"

DIAL-4+ governs claim strength: confidence, evidence quality, scope. It answers "how strong is this claim within its type?"

Diataxis governs document form: tutorial, how-to, reference, explanation. It answers "where should this claim be expressed?"

5PP governs the operating stance: possibility governance. It answers "what do we do with claims that are not yet resolved?"

5PP is a five-step reasoning discipline - Clarify, Scope, Plan, Execute, Verify - that was designed to prevent work from drifting mid-task. Inside DIAL-4P, it contributes something specific: after claims have been typed and graded, 5PP governs what happens next. Clarify surfaces which claims need more evidence. Scope prevents the analysis from widening past its boundary. Plan sequences the work so that deductions are established before inferences build on them. Execute produces artifacts within the scoped boundary. Verify catches premature collapse - the moment when a plausible inference is quietly treated as confirmed.

The core design principle comes from that Verify step:

Do not collapse possibility unless contradiction, explicit constraint, or hard blocker forces collapse.

This changes how knowledge behaves. Instead of binary verdicts - yes/no, valid/invalid, ready/not ready - the system preserves graduated status:

- confirmed - deduction holds, evidence strong

- plausible - inference reasonable, evidence partial

- emerging - pattern visible, not yet stable

- buildable with custom engineering - possible but requires non-trivial work

- blocked by current tooling - not possible without dependency change

- not yet evidenced - no basis for claim in either direction

Applied to the running example under full DIAL-4P governance:

The deduction ("six named layers exist") is confirmed. The inference ("likely reduces rewriting") is plausible - reasonable but untested at scale. The abatement ("may reduce debugging time") is emerging - observed in one environment, not measured. The legitimation boundary ("reduces layer-level reuse effort, not domain tuning") is confirmed as a scope constraint.
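The graduated statuses and the no-premature-collapse principle can be sketched as follows. The status values and the three forcing conditions come from the text; the names `Status`, `FORCING`, and `may_collapse` are hypothetical, and the sketch deliberately does not say what a claim collapses into, since the article does not specify that.

```python
from enum import Enum


class Status(Enum):
    """Graduated possibility statuses, in place of a binary verdict."""
    CONFIRMED = "confirmed"
    PLAUSIBLE = "plausible"
    EMERGING = "emerging"
    BUILDABLE_WITH_CUSTOM_ENGINEERING = "buildable with custom engineering"
    BLOCKED_BY_CURRENT_TOOLING = "blocked by current tooling"
    NOT_YET_EVIDENCED = "not yet evidenced"


# The three conditions the design principle names as forcing collapse.
FORCING = frozenset({"contradiction", "explicit constraint", "hard blocker"})


def may_collapse(reason):
    """Allow collapsing a claim's status only for one of the forcing reasons."""
    return reason in FORCING


# Statuses assigned to the running example in the text.
running_example = {
    "deduction": Status.CONFIRMED,
    "inference": Status.PLAUSIBLE,
    "abatement": Status.EMERGING,
    "legitimation": Status.CONFIRMED,
}
distinct_levels = set(running_example.values())
```

A reason such as "sounds convincing" is not in `FORCING`, so `may_collapse` rejects it and the claim keeps its graduated status.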

Without DIAL-4P, the original claim - "a context-stack architecture reduces prompt engineering effort" - would be published as a single flat assertion. With DIAL-4P, it becomes four typed claims at three different strength levels, expressed in appropriate document forms, with possibility status that tells the reader exactly what is established, what is plausible, and what remains open.

The operating stance is not caution for its own sake. It is precision about what you know, how strongly you know it, and what remains possible but unresolved. That distinction is what prevents reasoning from quietly collapsing into something simpler - and less honest - than the evidence supports.

Before and After

Without DIAL-4P:

A context-stack architecture reduces prompt engineering effort. The six-layer separation enables reuse across projects. Teams spend less time debugging prompt interactions. The architecture is proven and effective.

Four different claim types, presented at uniform confidence, in a single undifferentiated paragraph. The reader cannot tell which parts are established, which are inferred, and which are aspirational.

With DIAL-4P:

The same material, governed:

- Deduction (confirmed): The architecture separates context into six named layers: global, character, command, constraints, context, input.

- Inference (plausible): This separation likely enables prompt reuse across projects, reducing rewriting effort.

- Abatement (emerging): Teams may spend less time debugging cross-layer prompt interactions, though this has not been measured.

- Legitimation (confirmed scope): The reuse benefit applies to layer-level structure. Domain-specific prompt tuning remains per-project work and is not reduced by the architecture.

And the 5PP discipline determines what follows: the confirmed deduction can be referenced without caveat. The plausible inference enters the next cycle with a scoped investigation plan. The emerging abatement is tagged for measurement, not repeated as fact. The legitimation boundary is installed as a constraint that future work must respect. Each claim type gets a different operational next step - not just a different label.
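The next steps described above can be expressed as a dispatch on claim type and status. This is a minimal sketch, assuming only the four combinations from the running example; `NEXT_STEP` and `next_step` are hypothetical names, and any other combination is treated as undefined rather than guessed.

```python
# Next steps for the four governed claims in the running example.
NEXT_STEP = {
    ("deduction", "confirmed"): "reference without caveat",
    ("inference", "plausible"): "enter the next cycle with a scoped investigation plan",
    ("abatement", "emerging"): "tag for measurement; do not repeat as fact",
    ("legitimation", "confirmed"): "install as a constraint on future work",
}


def next_step(mode, status):
    """Return the operational next step, refusing combinations the text does not cover."""
    try:
        return NEXT_STEP[(mode, status)]
    except KeyError:
        raise ValueError(f"no next step defined for {mode!r} with status {status!r}")


step = next_step("abatement", "emerging")
```

Raising on an unknown pair, such as an inference marked confirmed, is a design choice: it surfaces a possible category change instead of quietly assigning a default.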

The claims are the same. The honesty is different. And the work that follows is governed, not improvised - because 5PP gives each claim type a disciplined next step instead of a uniform confidence it did not earn.

This article is a companion to the From Meta-Prompt to Asset Factory series on Adaptivearts.ai.

Related: From Protocol to Runtime - where 5PP stops being a prompt scaffold and becomes a runtime engine. From Giant Meta-Prompt to 5PP - where the series begins.