Key Takeaways

- Formalizing a skill discovery sub-step within the planning phase transforms cognitive workflows from manual outlines into dynamically compiled execution programs.

- Implementing Level 3 integration allows skills to function as composable units that assemble into Directed Acyclic Graphs based on standardized input/output contracts.

- Creating a formal decision boundary between reasoning and capability enables a system to explicitly query its registry rather than relying on implicit assumptions of available tools.

- A self-improving feedback loop based on execution data refines the skill registry, optimizing for relevance and success rate in subsequent task compositions.

Who this is for

AI system architects designing autonomous agent frameworks and structured workflows

The Gap in Step 3

5PP structures how you think. Skills structure what you do. But there is a gap between them.

When the protocol reaches Step 3 (Plan), it relies on implicit knowledge of available tools. There is no systematic "which skills apply to this task?" decision point. The planner just... knows. Or guesses. Or misses things.

The fix is to add a skill discovery sub-step inside the planning phase, turning Step 3 from "outline an approach" into "compile an execution plan from available capabilities."

The Upgraded Step 3

3a - Classify: What type of task is this? Research, audit, content, infrastructure, security...

3b - Query: Search the skill registry for matches - by trigger phrases, domain tags, input/output compatibility

3c - Select & Rank: Match skills to the task, score by relevance and past success rate, filter by constraints

3d - Compose: Build an execution graph by connecting skills based on their input/output contracts

This is no longer planning. This is compiling a program dynamically.
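
To make sub-steps 3a to 3c concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Skill` fields, the registry contents, the keyword classifier, and the scoring formula (trigger matches multiplied by past success rate) are hypothetical stand-ins, not a definition of how 5PP implements discovery. Step 3d (Compose) is left out here because it depends on input/output contracts.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    domains: set[str]      # domain tags queried in 3b
    triggers: list[str]    # trigger phrases matched in 3c
    success_rate: float    # assumed to be derived from past runs


# Hypothetical registry contents, for illustration only.
REGISTRY = [
    Skill("repo-scan", {"security", "audit"}, ["audit", "scan"], 0.90),
    Skill("vulnerability-check", {"security"}, ["vulnerab"], 0.80),
    Skill("report-generator", {"security", "research"}, ["report"], 0.95),
    Skill("keyword-research", {"content"}, ["keyword"], 0.70),
]

# Hypothetical keyword table for the naive classifier in 3a.
DOMAIN_KEYWORDS = {
    "security": ["audit", "vulnerab"],
    "content": ["article", "blog"],
}


def classify(task: str) -> str:
    """3a: pick the first domain whose keywords appear in the task."""
    lowered = task.lower()
    for domain, words in DOMAIN_KEYWORDS.items():
        if any(word in lowered for word in words):
            return domain
    return "research"  # assumed fallback domain


def query(domain: str) -> list[Skill]:
    """3b: return every registered skill tagged with the domain."""
    return [skill for skill in REGISTRY if domain in skill.domains]


def rank(task: str, candidates: list[Skill]) -> list[Skill]:
    """3c: order candidates by trigger matches weighted by success rate."""
    lowered = task.lower()

    def score(skill: Skill) -> float:
        relevance = 1 + sum(trigger in lowered for trigger in skill.triggers)
        return relevance * skill.success_rate

    return sorted(candidates, key=score, reverse=True)


task = "Audit this repo for vulnerabilities and write a report"
domain = classify(task)
selected = rank(task, query(domain))
print(domain, [skill.name for skill in selected])
```

Note that the ranking reflects how well each skill fits the task, not the order in which skills run. Execution order only appears once the selected skills are connected by their contracts.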

Three Levels of Integration

Level 1: Advisory

Step 3 includes a checklist item: "check if any skills match this task." Human or AI decides. Still prompt-centric.

Level 2: Mapped

Skills carry metadata - domains, triggers, compatible steps. Step 3 queries this registry systematically. This is where it becomes automatic.

Level 3: Composable

Skills declare inputs, outputs, and dependencies. 5PP builds an execution graph (DAG) from their contracts. Skills become program units. This is Skills 2.0 territory.

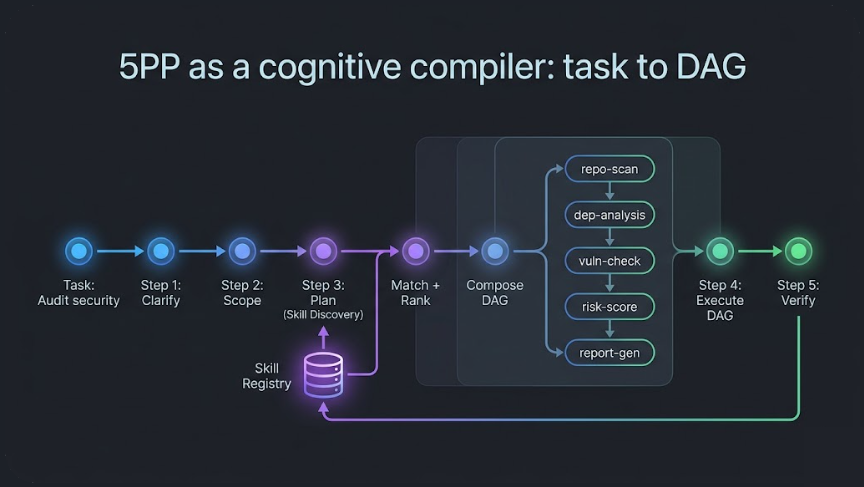

The DAG Compiler

At Level 3, Step 3 produces a Directed Acyclic Graph - a dependency-aware execution blueprint assembled from skill contracts.

Each skill declares what it needs and what it produces. The compiler connects outputs to inputs:

repo-scan -> dependency-analysis -> vulnerability-check -> risk-scoring -> report-generator

When skills have no dependencies on each other, they run in parallel. The same report-generator skill works across security audits, research pipelines, and analytics - reuse without hardcoding.
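
A minimal sketch of such a compiler, assuming each skill contract is just a set of named input artifacts and a set of named output artifacts. The artifact names (`repo_url`, `file_index`, and so on) and the extra `license-check` skill are hypothetical, added only to show a parallel branch. The function groups skills into stages: every skill in a stage has all its inputs available, so the skills within one stage could run in parallel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    inputs: frozenset[str]    # artifacts the skill needs
    outputs: frozenset[str]   # artifacts the skill produces


def skill(name: str, inputs: list[str], outputs: list[str]) -> Skill:
    return Skill(name, frozenset(inputs), frozenset(outputs))


def compile_dag(skills: list[Skill], provided: set[str]) -> list[list[str]]:
    """Group skills into stages by connecting outputs to inputs.

    Raises ValueError when some skill can never run, which covers both
    missing inputs and circular dependencies.
    """
    available = set(provided)
    remaining = list(skills)
    stages: list[list[str]] = []
    while remaining:
        ready = [s for s in remaining if s.inputs <= available]
        if not ready:
            missing = {s.name: sorted(s.inputs - available) for s in remaining}
            raise ValueError(f"unsatisfied inputs or cycle: {missing}")
        stages.append(sorted(s.name for s in ready))
        for s in ready:
            available |= s.outputs
        remaining = [s for s in remaining if s not in ready]
    return stages


# Hypothetical contracts for the audit pipeline described above.
SKILLS = [
    skill("report-generator", ["risk_scores", "license_findings"], ["report"]),
    skill("risk-scoring", ["vulnerabilities"], ["risk_scores"]),
    skill("vulnerability-check", ["dependency_list"], ["vulnerabilities"]),
    skill("dependency-analysis", ["file_index"], ["dependency_list"]),
    skill("license-check", ["file_index"], ["license_findings"]),
    skill("repo-scan", ["repo_url"], ["file_index"]),
]

stages = compile_dag(SKILLS, provided={"repo_url"})
for number, names in enumerate(stages, start=1):
    print(number, names)
```

The skills are listed in a deliberately scrambled order: the stage order comes from the contracts alone, not from how the registry happens to store them. In this sketch `dependency-analysis` and `license-check` land in the same stage because neither depends on the other.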

The Operating System Analogy

5PP as a cognitive compiler: task to DAG

What emerges is no longer a protocol system. It becomes something closer to an operating system:

| Component | OS Analogy |
|---|---|
| 5PP | Control loop (CPU) |
| Skills | Executable programs |
| Skill registry | Filesystem |
| Memory | Persistent storage |
| Evaluation | Learning mechanism |
| Composition | Compiler |

Self-Improvement Loop

With Skills 2.0, the compiled pipeline feeds back into itself:

Execution -> Evaluation -> Skill update -> Better execution next time

Skills are scored on relevance, success rate, and latency. Composition constraints prevent bad chains. Every run produces feedback that refines the registry.
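
One way the per-run feedback could update a registry entry is an exponential moving average, sketched below. This is an assumed scoring scheme, not one the series specifies: the `SkillStats` fields, the starting values, and the smoothing factor `alpha` are all hypothetical. Relevance is not tracked here because it depends on the task at hand rather than on the skill's history.

```python
from dataclasses import dataclass


@dataclass
class SkillStats:
    success_rate: float = 0.5    # assumed neutral prior before any runs
    avg_latency_s: float = 1.0   # assumed starting latency estimate
    runs: int = 0


def record_run(stats: SkillStats, succeeded: bool, latency_s: float,
               alpha: float = 0.2) -> SkillStats:
    """Blend one run's outcome into the running scores.

    alpha controls how quickly old evidence is forgotten: higher values
    react faster to recent runs, lower values are more stable.
    """
    outcome = 1.0 if succeeded else 0.0
    stats.success_rate = (1 - alpha) * stats.success_rate + alpha * outcome
    stats.avg_latency_s = (1 - alpha) * stats.avg_latency_s + alpha * latency_s
    stats.runs += 1
    return stats


stats = SkillStats()
record_run(stats, succeeded=True, latency_s=2.0)
record_run(stats, succeeded=False, latency_s=1.0)
print(stats)
```

A moving average is chosen here so that a skill which degrades over time loses rank without needing its full run history stored.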

But this creates risks: skill explosion (too many skills), bad composition (wrong chains), and drift (loss of coherence). The guards are skill typing with clear input/output contracts, composition constraints, and evaluation feedback on every run.

The Decision Boundary

The deepest insight in this evolution:

Before, reasoning implicitly knew what to do. Now, reasoning queries capability space explicitly.

This introduces a formal decision boundary between thinking and doing. The protocol decides what needs to happen. The skill registry reveals what can happen. The compiler connects the two into an executable plan.
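
The boundary can be illustrated with a small sketch in which the plan states the capabilities it needs and the registry answers which of them it can actually provide. The capability names and registry shape are hypothetical. The point is only that gaps are reported explicitly instead of being assumed away.

```python
def resolve(required: list[str],
            registry: dict[str, set[str]]) -> tuple[dict[str, str], list[str]]:
    """Split required capabilities into resolved ones and explicit gaps.

    registry maps a skill name to the capabilities it offers. The first
    matching skill wins; a real system would rank the providers.
    """
    resolved: dict[str, str] = {}
    gaps: list[str] = []
    for capability in required:
        providers = [name for name, offered in registry.items()
                     if capability in offered]
        if providers:
            resolved[capability] = providers[0]
        else:
            gaps.append(capability)
    return resolved, gaps


# Hypothetical plan requirements ("what needs to happen").
required = ["scan_repository", "score_risk", "translate_report"]

# Hypothetical registry ("what can happen").
registry = {
    "repo-scan": {"scan_repository"},
    "risk-scoring": {"score_risk"},
}

resolved, gaps = resolve(required, registry)
print("resolved:", resolved)
print("gaps:", gaps)
```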

The result: a system that can take a task and, as far as its registry covers the required capabilities, automatically build its own execution pipeline.

Which raises a question: when a system builds its own pipeline... what is "it"?

This article is Part 11 of the From Meta-Prompt to Asset Factory series on Adaptivearts.ai.

Previously: Toward Production-Grade Reasoning - the seven dimensions that separate prototype from production. Next: Run Context and Identity - what "it" is when a system builds its own pipeline.