Key Takeaways

- Over-procedural agent systems underperform because they constrain capable reasoning to less capable workflows

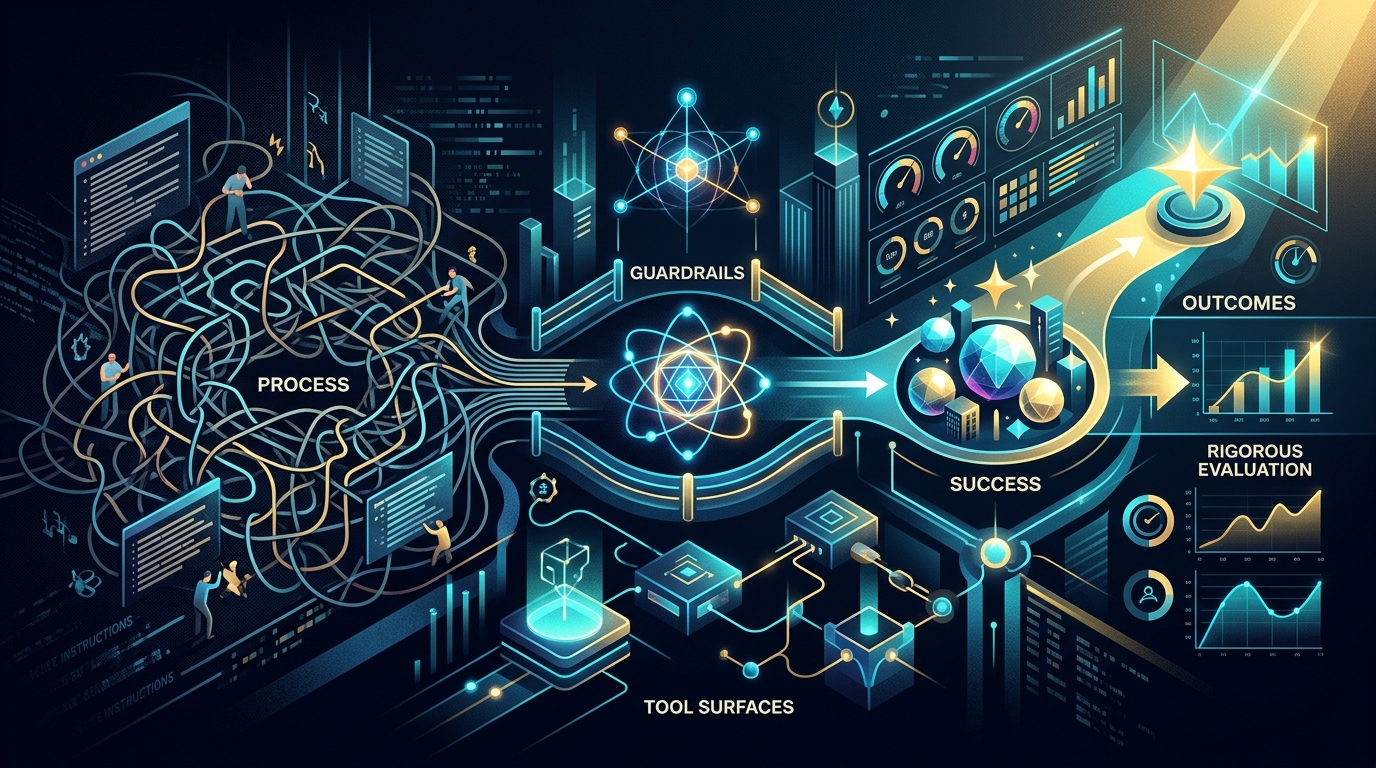

- Four design surfaces replace process: outcome specification, guardrails, tool definitions, and evaluation

- Agents should get more freedom in reasoning, not more freedom in boundaries

- The human role shifts from step-by-step choreography to direction-setting and verification

Who this is for

Engineers and architects building or maintaining AI agent systems

Agentic Systems Need Less Process and Better Outcomes

Why the best agent architectures are defined by what they aim for, not what they follow

Summary

Building effective AI agents is not about writing longer instructions. It is about specifying clearer outcomes, defining stronger guardrails, presenting better tool surfaces, and measuring results with rigorous evaluation. The systems that perform best are the ones that give the agent freedom in how it reasons and works, while being precise about what must be true when it finishes.

The Problem with Procedural Agents

Most agent systems are built the same way. A system prompt describes the agent's role. A sequence of instructions tells the agent what to do first, second, third. Routing logic decides which path to follow. Checks are inserted at each stage. The result is a pipeline that works, but that also encodes a fixed workflow into every interaction.

This approach made sense when the underlying systems needed explicit guidance to produce consistent results. If the system could not reliably decide what to do next, you had to decide for it. If it could not assess its own output, you had to insert checkpoints.

But the systems are getting better at deciding what to do next. And the procedural pipelines are not getting better with them. They are staying fixed - encoding the limitations of the capability level they were designed for.

The result is a common pattern: an agent system that works, but works at a level well below what the system could achieve if it were given a clear goal, appropriate constraints, and the freedom to figure out the rest.

What Over-Scaffolded Agents Look Like

You can recognize an over-scaffolded agent system by its symptoms:

Rigid routing logic. The system classifies inputs into categories and routes each to a predetermined handler. The classification scheme was built for a specific set of use cases. New cases get forced into existing categories or fall through.

Prescribed execution sequences. The system follows a fixed order of operations regardless of whether that order is optimal for the specific task. Steps that are unnecessary for certain inputs still execute because the pipeline requires them.

Intermediate validation everywhere. Each stage has its own validation step, checking the output before passing it to the next stage. The checks add latency and complexity while catching fewer errors as the underlying system improves.

Hard-coded retrieval strategies. The system uses a fixed retrieval pipeline - specific embedding models, fixed chunk sizes, predetermined re-ranking logic - regardless of whether the system could achieve better results by managing its own context.
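The first symptom, rigid routing, is easy to see in code. The sketch below is a hypothetical keyword router of the kind described above. The categories, keywords, and handler names are invented for illustration. It shows both failure modes. A message that touches two categories is forced into whichever one matches first, and a case nobody anticipated falls through to the fallback.

```python
# Hypothetical keyword router; categories, keywords, and handler names are illustrative only.
ROUTES: dict[str, str] = {
    "refund": "refund_handler",
    "shipping": "shipping_handler",
    "password": "account_handler",
}


def route(message: str) -> str:
    """Return the handler for the first matching keyword, else a fallback."""
    text = message.lower()
    for keyword, handler in ROUTES.items():
        if keyword in text:
            return handler  # first match wins; the rest of the message is ignored
    return "fallback_handler"  # cases the scheme never anticipated land here


print(route("Where is my refund? Also, shipping was late."))  # refund_handler
print(route("I was charged twice for the same order."))  # fallback_handler
```

The shipping complaint in the first message never reaches a handler. The double charge in the second has no category at all, so it goes to the fallback.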

None of these patterns is always wrong. But when they persist past the capability level that required them, they become overhead, and the procedural scaffolding becomes a ceiling on what the agent system can accomplish.

The Four Surfaces That Replace Process

When you remove unnecessary process from an agent system, four design surfaces become primary. These are not new concepts. They are what remains after the subtraction.

1. Outcome specification

An outcome specification describes what success looks like. Not how to get there. Not what steps to follow. Just what must be true when the work is done.

A procedural instruction says: "First classify the customer's intent into one of 14 categories, then retrieve the top 5 knowledge base articles, then generate a response using only the retrieved context."

An outcome specification says: "Resolve the customer's issue using our knowledge base and policies. The customer should leave satisfied and the resolution should comply with our return policy."

The difference is not just stylistic. The outcome specification gives the system room to decide how to achieve the result. Suppose the system can resolve the issue more effectively another way, for example by skipping classification for a simple request or by consulting more than five articles for a complex one. The outcome specification allows that. The procedural instruction does not.

Writing good outcome specifications is a design craft. It requires understanding what success actually means in your domain - not in terms of process steps, but in terms of results.
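One way to keep a specification focused on results is to store it as data and render it into the agent's prompt. This is a minimal sketch, assuming the spec is delivered as prompt text. The `OutcomeSpec` class and `to_prompt` method are hypothetical names, and the criteria restate the customer-support example above. Nothing in the spec names a step, a category count, or a retrieval size.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeSpec:
    """What must be true when the agent finishes; deliberately says nothing about steps."""

    goal: str
    success_criteria: tuple[str, ...] = ()

    def to_prompt(self) -> str:
        lines = [f"Goal: {self.goal}", "When you finish, all of the following must be true:"]
        lines += [f"- {criterion}" for criterion in self.success_criteria]
        lines.append("Choose your own approach and tools to get there.")
        return "\n".join(lines)


# Hypothetical spec for the customer-support example.
support_spec = OutcomeSpec(
    goal="Resolve the customer's issue using our knowledge base and policies.",
    success_criteria=(
        "The customer's issue is resolved and the customer leaves satisfied.",
        "The resolution complies with our return policy.",
    ),
)

print(support_spec.to_prompt())
```

Keeping the spec as structured data also means the same success criteria can later be reused by the evaluation step instead of being restated by hand.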

2. Guardrails

When you remove procedural instructions, guardrails become the primary way you shape behavior. A guardrail defines what must not happen, regardless of how the system achieves the outcome.

"Never disclose customer financial data." "Always cite sources when referencing published research." "Responses must comply with accessibility guidelines."

These are durable. They survive capability improvements because they reflect your domain, your regulations, and your standards - not the system's limitations.

The key insight is that guardrails are not a safety tax bolted onto a procedural system. When the procedures are removed, guardrails become the creative design surface through which you define the system's behavior. Getting them right is more important than getting the procedure right, because they persist while procedures should evolve.
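Because a guardrail constrains the result rather than the path, it can be expressed as a predicate over the final output. The sketch below assumes that shape. `Guardrail`, `violations`, and both checks are hypothetical. The card-number regex and the "Source:" convention are crude stand-ins, not a real PII detector or citation checker.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Illustrative pattern only: 13 to 16 digits, optionally separated by spaces or dashes.
CARD_NUMBER = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


@dataclass(frozen=True)
class Guardrail:
    name: str
    is_violated: Callable[[str], bool]  # inspects only the final output, not how it was produced


GUARDRAILS = [
    # Stand-in for "Never disclose customer financial data."
    Guardrail("no_financial_data", lambda text: bool(CARD_NUMBER.search(text))),
    # Crude stand-in for "Always cite sources": a mention of research needs a "Source:" line.
    Guardrail(
        "cite_sources",
        lambda text: "research" in text.lower() and "source:" not in text.lower(),
    ),
]


def violations(text: str, guardrails: list[Guardrail]) -> list[str]:
    """Names of every guardrail the text violates, in declaration order."""
    return [g.name for g in guardrails if g.is_violated(text)]


print(violations("Your card 4111 1111 1111 1111 is on file.", GUARDRAILS))
```

The same guardrail list applies unchanged whether the agent classified, retrieved, or did neither, which is what lets guardrails outlast the procedures.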

3. Tool definitions

An agent system is only as effective as the tools it can use. Tool definitions describe what each capability does, what inputs it expects, and what outputs it produces.

When a system is procedurally directed - "call Tool A, then Tool B, then Tool C" - the quality of the tool definitions matters less. The system is told what to call and when.

When the system decides what to call, tool definitions become critical. Each tool needs to be described precisely enough that the system can assess when to use it, how to combine it with other tools, and what to do with the result.

This is a design investment that pays for itself. Well-defined tools enable the system to solve problems you did not anticipate, because the system can compose tools in ways you did not prescribe.
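Precision in a tool definition can be partly checked mechanically. The sketch below writes two hypothetical customer-support tools in the JSON Schema-style shape that many function-calling APIs accept. Exact field names vary by provider. It then lints them for gaps that would leave an agent guessing. The tool names, the eight-word threshold, and `lint_tool` are assumptions for illustration.

```python
# Hypothetical tool definitions; exact field names vary by provider.
TOOLS = [
    {
        "name": "lookup_order",
        "description": (
            "Fetch a single order by ID. Returns status, items, purchase date, "
            "and total. Use before answering any question about a specific order."
        ),
        "parameters": {
            "type": "object",
            "properties": {
                "order_id": {
                    "type": "string",
                    "description": "Order ID exactly as printed on the receipt.",
                },
            },
            "required": ["order_id"],
        },
    },
    {
        "name": "check_return_eligibility",
        "description": "Check an order against the return policy.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
]

MIN_DESCRIPTION_WORDS = 8  # arbitrary threshold for this sketch


def lint_tool(tool: dict) -> list[str]:
    """Flag definitions too thin for an agent to decide when and how to call them."""
    name = tool["name"]
    problems = []
    if len(tool.get("description", "").split()) < MIN_DESCRIPTION_WORDS:
        problems.append(f"{name}: description too short to say when to use it")
    params = tool.get("parameters", {})
    properties = params.get("properties", {})
    for param, schema in properties.items():
        if not schema.get("description"):
            problems.append(f"{name}.{param}: parameter has no description")
    for param in params.get("required", []):
        if param not in properties:
            problems.append(f"{name}.{param}: required but not defined")
    return problems


for tool in TOOLS:
    print(tool["name"], lint_tool(tool))
```

Here `lint_tool(TOOLS[0])` returns an empty list. The second tool is flagged twice: its description never says when to call it or what it returns, and its parameter is undocumented.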

4. Evaluation

When you give an agent more freedom in how it works, you need more rigor in how you verify the result. Evaluation is the counterweight to procedural flexibility.

The pattern that works: specify the outcome clearly, give the system freedom to achieve it, then evaluate the result comprehensively. A single evaluation gate at the end that tests everything - functional requirements, quality standards, compliance, edge cases - is simpler and more reliable than distributed checks at each stage.

Evaluation is also the mechanism that builds trust. When you can show that the system consistently produces results that pass rigorous evaluation, you can justify giving the system more freedom. When evaluation catches failures, you know where to add constraints - not more process, but better guardrails.

Why This Works Better

The argument for less process and better outcomes is not theoretical. It follows from a straightforward observation about how capable systems behave.

A system that is told exactly what to do will do exactly what it is told. If the instructions are good, the result is good. If the instructions encode limitations that no longer apply, the result is limited by those instructions.

A system that is given a clear outcome, appropriate constraints, and well-defined tools will find a way to achieve the outcome. If the system's reasoning ability has improved since the instructions were written, the result reflects that improvement. The instructions do not hold it back.

This is why procedural agent systems plateau. They work at the level they were designed for, regardless of how capable the underlying system becomes. The more capable the system, the greater the gap between what it could do and what the procedural pipeline allows it to do.

The Human Role After Subtraction

This shift changes what the human does in agent system design. Instead of scripting every step, the human defines four things:

- The goal. What does success look like? What must the system produce?

- The constraints. What must not happen? What standards must be met?

- The tools. What capabilities are available? How are they described?

- The proof. How do we know the system succeeded? What does the evaluation check?

This is a more demanding role than writing procedural instructions. Setting clear direction requires deeper understanding of the domain than specifying a sequence of steps. But it is also a more durable role, because it survives capability improvements without requiring rewrites.

Getting Started

If you build or maintain agent systems, start with this exercise:

- Pick one agent workflow. Write down every procedural instruction it follows.

- For each instruction, ask: does this describe an outcome, a constraint, or a process step?

- For the process steps, ask: could the system figure this out on its own if I just specified the outcome?

- Rewrite the workflow using only outcomes and constraints. Test it.

- Add evaluation that checks everything the process steps used to check.
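The classification in this exercise is a human judgment, but recording it in a structured form makes the before-and-after comparison easier. In this sketch the instructions come from the customer-support example earlier in the article. The labels are one possible reading, and `Kind` and `split_audit` are hypothetical names. Process steps are not simply deleted. They become the list of things the evaluation must now check.

```python
from collections import Counter
from enum import Enum


class Kind(Enum):
    OUTCOME = "outcome"
    CONSTRAINT = "constraint"
    PROCESS = "process"


# Hypothetical audit: each instruction is labeled by a human reviewer, not by code.
AUDIT = [
    ("Classify the customer's intent into one of 14 categories", Kind.PROCESS),
    ("Retrieve the top 5 knowledge base articles", Kind.PROCESS),
    ("Generate a response using only the retrieved context", Kind.PROCESS),
    ("Resolve the customer's issue using our knowledge base and policies", Kind.OUTCOME),
    ("Never disclose customer financial data", Kind.CONSTRAINT),
    ("The resolution must comply with our return policy", Kind.CONSTRAINT),
]


def split_audit(audit: list[tuple[str, Kind]]) -> tuple[list[str], list[str]]:
    """Keep outcomes and constraints; return process steps whose checks move into evaluation."""
    kept = [text for text, kind in audit if kind is not Kind.PROCESS]
    to_evaluation = [text for text, kind in audit if kind is Kind.PROCESS]
    return kept, to_evaluation


kept, to_evaluation = split_audit(AUDIT)
print(Counter(kind.value for _, kind in AUDIT))
```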

You may find that the simpler version performs as well as or better than the procedural version. If it does, the process steps were overhead. If it does not, examine which process steps actually mattered and convert those into constraints or tool improvements rather than restoring the full procedure.

Conclusion

The best agent systems are not the ones with the most detailed instructions. They are the ones with the clearest outcomes, the strongest guardrails, the best-defined tools, and the most rigorous evaluation.

As systems become more capable, the design discipline shifts. Less process. Better outcomes. Stronger constraints. Stricter proof.

This is the direction the Subtraction Discipline points toward: an approach to agent design where the human sets the direction and verifies the result, and the system handles everything in between.

This article is part of the Subtraction Discipline series on Adaptivearts.ai.

Previously: The Per-Line Audit - a 15-minute exercise for any system prompt.

Framework: The Subtraction Discipline - the full methodology.