Who this is for

AI practitioners, technical leads, and workflow designers who have accumulated significant prompt engineering infrastructure

The Subtraction Discipline: Designing AI Systems by Removing What You Don't Need

Why effective AI architectures are increasingly built by taking things away

Summary

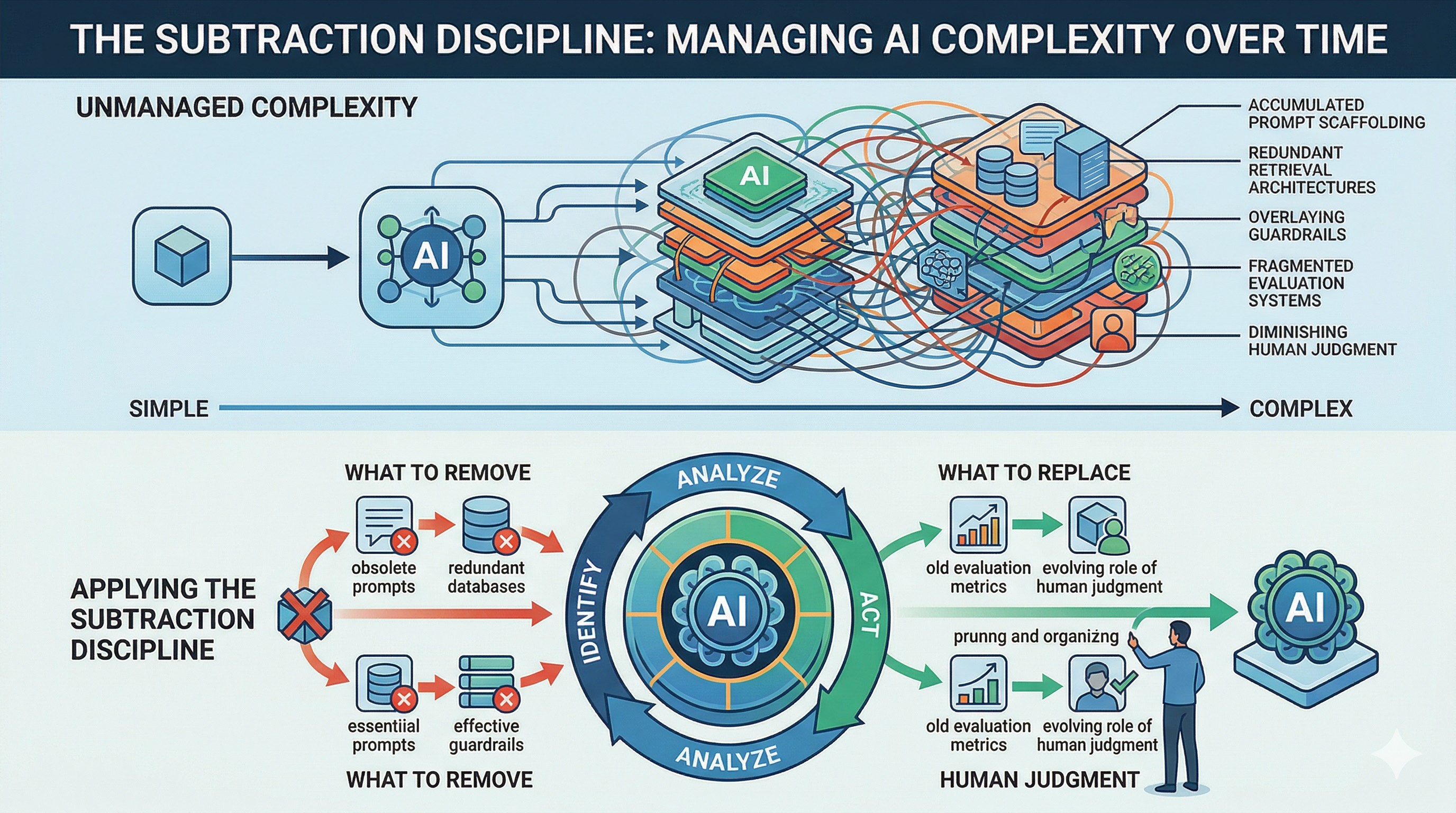

AI systems accumulate complexity the way buildings accumulate wiring. Each addition solved a real problem at the time. But as the capabilities underneath improve, much of that accumulated infrastructure stops helping and starts interfering. The Subtraction Discipline is a framework for identifying what to remove, what to keep, and what to replace with something better. It covers prompt scaffolding, retrieval architecture, guardrails, evaluation, and the evolving role of human judgment in AI system design.

Key Points

- Effective AI system design increasingly depends on removing unnecessary complexity, not adding more

- Three categories of system prompt content: outcome specifications, persistent constraints, and compensatory scaffolding

- Context architecture replaces retrieval engineering as the primary design concern

- Guardrails become creative design material when procedural scaffolding dissolves

- Evaluation architecture consolidates toward comprehensive end-gates

- The human role shifts from process management to direction-setting and quality judgment

The Accumulation Problem

A customer support system has a system prompt that has grown to 3,000 tokens over eighteen months. Half of it is procedural: classify the intent into one of fourteen categories, route to the appropriate handler, retrieve the top five knowledge base articles, check for hallucinated URLs, format the response according to the style guide. Each step was added because something went wrong without it.

Now remove the procedural half. Replace it with two sentences: "Resolve the customer's issue using our knowledge base, account history, and return policy. The customer should leave satisfied."

In a growing number of cases, the shorter version produces results that are as good or better. The system handles routing, retrieval, and formatting on its own. The eighteen months of accumulated procedure was not protecting quality. It was constraining it.

This is the accumulation problem. Most AI systems have a version of it. And once you see it, you start noticing it everywhere.

How it happens

Every AI system starts lean. A clear instruction, a focused purpose, a short prompt. Then reality arrives. A customer reports a confusing response, so you add a clarification. Output quality varies on edge cases, so you add procedural steps. A failure mode surfaces, so you add a check. A new use case appears, so you add routing logic. Each addition is reasonable. Each one solves the problem it was written for.

Over months, these additions compound. What started as a focused system prompt becomes a layered document of accumulated fixes, procedural instructions, and compensatory logic. Some of this complexity is necessary. Much of it is not. The difficulty is telling the difference.

This accumulation is not a sign of poor engineering. It is the natural consequence of building systems on top of capabilities that keep changing. An instruction that was essential six months ago may be addressing a limitation that no longer exists. A procedural sequence that once prevented failure may now be preventing the system from applying a better approach.

The problem is that additions feel safe and deletions feel risky. Every line in a system prompt exists because someone added it for a reason. Removing it feels like removing a safety net. So the system grows, and each new instruction interacts with the existing complexity in ways that become harder to predict.

The compounding cost of procedural debt

This complexity carries real costs. Longer prompts consume more tokens, which affects latency and expense. Procedural instructions constrain the system's behavior in ways that may no longer be beneficial. And accumulated complexity makes every future change harder, because each new instruction must account for all the existing ones.

But the deepest cost is subtler: over-specified systems cannot take advantage of improved capabilities. When a system prompt dictates a fixed procedure, the system follows that procedure regardless of whether it could achieve a better result through a different approach. The instructions become a ceiling.

Why "it works, don't touch it" is the most expensive decision

The most common response to accumulated complexity is to leave it alone. If the system is producing acceptable results, the reasoning goes, why risk breaking it by removing things?

This reasoning is expensive because it locks the system into its current performance level. Every capability improvement that could have benefited the system is blocked by instructions that enforce the old way of doing things. The system works, but it works at the level it was designed for, not the level it could operate at.

The Subtraction Test

The core of the Subtraction Discipline is a classification exercise. Every instruction in a system prompt falls into one of three categories:

Outcome specifications

These describe what the result should look like, what success means, or what the user needs. They tell the system what and why.

"Respond in the user's language." "The report should include both quantitative and qualitative assessment." "Resolve the customer's issue using our knowledge base and policies."

Outcome specifications are durable. They survive capability improvements because they describe your goals, not the system's limitations.

Persistent constraints

These define boundaries that should hold regardless of how capable the system becomes. They exist because of your domain, your regulations, your standards - not because of the system's current behavior.

"Never disclose customer financial data." "Always cite the source when referencing published research." "Responses must comply with our accessibility guidelines."

Persistent constraints are also durable. A more capable system still needs to know what it must not do and what standards it must meet.

Compensatory scaffolding

These tell the system how to do something because, when the instruction was written, the system could not figure out the right approach on its own.

"First classify the intent into one of 14 categories, then route to the appropriate handler." "Retrieve the top five knowledge base articles before generating a response." "Check your response for hallucinated URLs before returning it."

Compensatory scaffolding is your subtraction target. Each of these instructions encodes an assumption about what the system cannot do. When that assumption is no longer true, the instruction is no longer helping.

What survives subtraction

After classification, what remains is a system prompt built from two durable categories: what you want (outcomes) and what you require (constraints). The procedures are gone, replaced by the system's own judgment about how to achieve the specified outcomes within the specified constraints.
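The classification and the subtraction step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Category`, `PromptLine`, and `subtract` names are hypothetical, and the labels are assumed to come from a human reviewer rather than from any automated classifier.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    OUTCOME = "O"
    CONSTRAINT = "C"
    SCAFFOLDING = "S"


@dataclass(frozen=True)
class PromptLine:
    text: str
    category: Category


def subtract(lines: list[PromptLine]) -> str:
    """Return a prompt made only of the durable lines (outcomes and constraints)."""
    durable = [line.text for line in lines if line.category is not Category.SCAFFOLDING]
    return "\n".join(durable)


# Labels assigned by a reviewer; the example instructions are taken from this article.
classified = [
    PromptLine("Resolve the customer's issue using our knowledge base and policies.", Category.OUTCOME),
    PromptLine("Never disclose customer financial data.", Category.CONSTRAINT),
    PromptLine("Retrieve the top five knowledge base articles before generating a response.", Category.SCAFFOLDING),
    PromptLine("Check your response for hallucinated URLs before returning it.", Category.SCAFFOLDING),
]

subtracted_prompt = subtract(classified)
print(subtracted_prompt)
```

The output is a candidate prompt to test against the original, not a guaranteed replacement. Whether each scaffolding line can really go is an empirical question.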

This is not a loss of control. It is a shift in the type of control. Instead of controlling how the system works, you control what it works toward and what it must not violate. This is a more resilient form of control, because it survives capability changes without requiring prompt updates.

Context Architecture Replaces Retrieval Engineering

When procedural instructions are removed, something must fill the gap. For retrieval and information access, that something is context architecture.

From retrieval pipelines to organized repositories

Traditional retrieval design focuses on building pipelines: query reformulation, embedding search, re-ranking, filtering, chunking strategies. These pipelines encode decisions about what the system should see and in what order.

As systems become more capable at managing their own context, the design focus shifts. Instead of engineering the retrieval pipeline, you design the information environment. The question changes from "how do I get the right information to the system?" to "how do I organize information so the system can find what it needs?"

The context window as a self-managed resource

Capable systems are increasingly effective at deciding what information they need, finding it in available sources, and assembling a useful context for the task at hand. The design implication is straightforward: spend less effort on retrieval logic and more effort on making your information well-organized, well-labeled, and searchable.

This means clean repository structures, consistent naming, good metadata, and clear documentation. These are not new ideas. But they become first-class design concerns when the system is responsible for its own retrieval.

What "well-organized" means in practice

A well-organized information environment has three properties:

- Discoverable. The system can find what exists without being told where to look.

- Labeled. Each document, section, or resource has enough context for the system to assess its relevance.

- Structured. Related information is grouped in ways that make sense for the tasks the system performs.

If your information environment has these properties, you can often replace complex retrieval pipelines with a simple instruction: "Use the knowledge base to resolve this issue." The system handles the rest.
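As a rough way to check the three properties, the sketch below audits a document manifest. Everything here is an assumption made for illustration: the `Document` fields, the idea of an index file the system can read, and the use of a directory separator as a proxy for "grouped" are stand-ins for whatever your environment actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    path: str                      # e.g. "billing/refunds.md"
    title: str = ""
    summary: str = ""              # short text the system can use to judge relevance
    tags: list[str] = field(default_factory=list)


def audit_environment(docs: list[Document], index_paths: set[str]) -> dict[str, list[str]]:
    """List the documents that miss each of the three properties."""
    problems: dict[str, list[str]] = {"discoverable": [], "labeled": [], "structured": []}
    for doc in docs:
        if doc.path not in index_paths:        # absent from any index the system can read
            problems["discoverable"].append(doc.path)
        if not (doc.title and doc.summary):    # nothing to judge relevance by
            problems["labeled"].append(doc.path)
        if "/" not in doc.path:                # top-level file, not grouped with related material
            problems["structured"].append(doc.path)
    return problems


docs = [
    Document("billing/refunds.md", "Refund policy", "When and how refunds are issued.", ["billing"]),
    Document("notes_final_v2.md"),
]
report = audit_environment(docs, index_paths={"billing/refunds.md"})
print(report)
```

In this example the second file fails all three checks, which is typical of material that a retrieval pipeline has been quietly compensating for.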

Guardrails as the Primary Design Surface

When procedural scaffolding is removed, constraints and guardrails become the most important part of the system prompt. This is not a concession. It is a design opportunity. (For a broader treatment of trust and governance in AI systems, see Security-First AI.)

When process dissolves, boundaries remain

In a heavily procedural system, guardrails are one layer among many. They sit alongside routing logic, classification steps, and output formatting rules. When the procedural layers are removed, the guardrails become the primary way you shape the system's behavior.

This changes how you think about guardrails. They are no longer a compliance requirement bolted onto a procedural system. They are the design surface through which you define what the system should and should not do.

Business rules that persist vs. rules that compensate

Not every rule in a system prompt is a genuine guardrail. Some rules are constraints that reflect your domain, your legal requirements, or your quality standards. These persist regardless of system capability.

Other rules are compensatory: they exist because the system once had a tendency to do something undesirable, and the rule was added to prevent it. These are candidates for removal, because the tendency they compensate for may no longer exist.

The distinction matters. Genuine constraints should be clear, specific, and well-maintained. Compensatory rules should be tested and removed when they are no longer necessary.

The guardrail audit

To audit your guardrails:

- List every rule and constraint in your system prompt.

- Classify each as domain constraint (exists because of your business, regulations, or standards) or compensatory rule (exists because of a past system behavior).

- For each compensatory rule, test whether the behavior it prevents still occurs without the rule.

- Remove compensatory rules that are no longer necessary. Strengthen domain constraints that deserve more clarity.

The goal is a set of guardrails that is small, clear, and genuinely load-bearing. Every constraint should be there because your domain requires it, not because an earlier version of the system required it.
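The test in the third step amounts to an ablation: run the same inputs with the rule removed and see whether the unwanted behavior returns. The sketch below assumes a hypothetical `generate(system_prompt, user_input)` callable standing in for your model call, plus a `violates` function you supply. Neither is a real library API, and the stub model exists only to make the example runnable.

```python
from typing import Callable

# Hypothetical model interface: (system_prompt, user_input) -> output text.
Generate = Callable[[str, str], str]


def behavior_returns(
    generate: Generate,
    prompt_lines: list[str],
    rule: str,
    test_inputs: list[str],
    violates: Callable[[str], bool],
    tolerance: float = 0.0,
) -> bool:
    """Remove one rule and report whether the behavior it prevented comes back."""
    if not test_inputs:
        raise ValueError("need at least one test input")
    ablated_prompt = "\n".join(line for line in prompt_lines if line != rule)
    violations = sum(violates(generate(ablated_prompt, text)) for text in test_inputs)
    return violations / len(test_inputs) > tolerance


def stub_generate(system_prompt: str, user_input: str) -> str:
    # Stand-in for a real model call; this stub never invents a URL.
    return "You can start a return from the Orders page in your account."


prompt_lines = [
    "Resolve the customer's issue using our knowledge base and policies.",
    "Check your response for hallucinated URLs before returning it.",
]
needed = behavior_returns(
    generate=stub_generate,
    prompt_lines=prompt_lines,
    rule="Check your response for hallucinated URLs before returning it.",
    test_inputs=["How do I return an item?", "Where is my refund?"],
    violates=lambda output: "http://" in output or "https://" in output,
)
print(needed)  # False with this stub, so the rule is a removal candidate
```

A real run needs a test set large enough to expose rare failures. Apply this only to compensatory rules; domain constraints stay regardless of the result.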

Evaluation Architecture

As AI systems produce more output across more domains, evaluation becomes a design discipline in its own right. The Subtraction Discipline has implications for how you structure evaluation.

Why intermediate checks can cost more than they save

Many AI pipelines include validation steps at each stage: check the classification, validate the retrieval, review the draft, then check the final output. Each check adds latency, cost, and complexity.

When the system's reliability at each stage is high, the value of intermediate checks decreases. A check that rarely catches errors while adding cost and latency to every request is a net negative unless the errors it does catch are expensive enough to justify it. The subtraction principle applies: remove checks that no longer earn their place.
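One way to decide whether a check earns its place is a simple expected-value comparison per request. The function below is a back-of-the-envelope sketch; the parameter names and the example figures are invented for illustration, and it assumes an error the check misses would otherwise reach the user.

```python
def check_net_value(catch_rate: float, cost_per_escaped_error: float, cost_per_check: float) -> float:
    """Expected saving per request from running the check, minus the cost of the check.

    catch_rate: fraction of requests on which the check catches a real error.
    cost_per_escaped_error: what one uncaught error costs (support time, refunds, trust).
    cost_per_check: what running the check costs on every request (compute, latency).
    """
    return catch_rate * cost_per_escaped_error - cost_per_check


# Illustrative numbers only: the check fires on 0.2% of requests,
# an escaped error costs 5.00, and the check costs 0.03 per request.
value = check_net_value(catch_rate=0.002, cost_per_escaped_error=5.00, cost_per_check=0.03)
print(value < 0)  # True: with these numbers the check costs more than it saves
```

The same check flips to positive if escaped errors are expensive enough, which is why catch rate should always be read alongside error cost.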

The single comprehensive gate pattern

An alternative to distributed intermediate checks is a single comprehensive evaluation gate at the end of the pipeline. This gate tests everything: functional requirements, non-functional requirements, edge cases, formatting, and compliance.

The advantages are significant. A single gate is simpler to maintain, easier to make comprehensive, and cheaper to run than multiple distributed checks. It also avoids the problem of intermediate checks creating false confidence - where each check passes individually but the overall output still has issues.

The key requirement is that the end-gate must be genuinely comprehensive. It must test everything the intermediate checks would have tested, plus anything they would have missed. This takes effort to build, but the result is a simpler, more reliable evaluation architecture.
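A minimal sketch of the pattern, assuming each check is a plain function that inspects the final output and returns either `None` or a failure message. The three checks shown are placeholders; a real gate would carry your functional, formatting, and compliance checks.

```python
from typing import Callable, Optional

# A check inspects the final output and returns None on success or a failure message.
Check = Callable[[str], Optional[str]]


def not_empty(output: str) -> Optional[str]:
    return None if output.strip() else "response is empty"


def no_raw_urls(output: str) -> Optional[str]:
    return "response contains a URL" if "http" in output else None


def within_length(output: str, limit: int = 1200) -> Optional[str]:
    return None if len(output) <= limit else f"response exceeds {limit} characters"


def end_gate(output: str, checks: dict[str, Check]) -> dict[str, str]:
    """Run every check against the final output and collect all failures."""
    failures: dict[str, str] = {}
    for name, check in checks.items():
        message = check(output)
        if message is not None:
            failures[name] = message
    return failures


CHECKS: dict[str, Check] = {
    "not_empty": not_empty,
    "no_raw_urls": no_raw_urls,
    "within_length": within_length,
}

failures = end_gate("See http://example.com for details.", CHECKS)
print(failures)  # {'no_raw_urls': 'response contains a URL'}
```

The gate deliberately runs every check instead of stopping at the first failure, so one pass reports everything that is wrong with the output.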

Scaling evaluation beyond human review capacity

As AI systems produce more output, human review becomes a bottleneck. The per-item cost of human review does not decrease as volume increases. At some point, you need evaluation that scales with output volume.

This means investing in automated evaluation that you trust. Building that trust requires clear criteria, comprehensive test coverage, and a feedback loop that improves the evaluation over time. The goal is not to eliminate human judgment from evaluation, but to apply human judgment to the evaluation system rather than to each individual output.
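One possible form of that feedback loop is to have a human grade a small random sample and measure how often the automated evaluator agrees. The sketch below assumes pass/fail verdicts keyed by output ID; the function names, the sampling rate, and the verdict data are illustrative, not a recommended standard.

```python
import random


def sample_for_review(item_ids: list[str], rate: float, seed: int = 0) -> list[str]:
    """Pick a reproducible random subset of outputs for a human to grade."""
    k = min(len(item_ids), max(1, round(len(item_ids) * rate)))
    return random.Random(seed).sample(item_ids, k)


def agreement_rate(auto: dict[str, bool], human: dict[str, bool]) -> float:
    """Fraction of human-graded items where the automated verdict matches."""
    shared = auto.keys() & human.keys()
    if not shared:
        raise ValueError("no items graded by both evaluators")
    return sum(auto[item] == human[item] for item in shared) / len(shared)


# Made-up verdicts for four outputs: the evaluators disagree on "b" only.
auto_verdicts = {"a": True, "b": False, "c": True, "d": True}
human_verdicts = {"a": True, "b": True, "c": True, "d": True}
print(agreement_rate(auto_verdicts, human_verdicts))  # 0.75
```

Disagreements are the useful part: each one is either a gap in the automated criteria or a case where the human standard needs to be written down more clearly.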

The Human Role After Subtraction

The Subtraction Discipline changes what humans do in AI system design. The shift is from managing process to setting direction.

Direction-setting, not process management

When a system prompt is full of procedural instructions, the human's role is to maintain those procedures: update them, debug them, add new ones when problems arise. This is process management.

When the procedures are removed and replaced with outcomes and constraints, the human's role shifts to direction-setting: defining what the system should accomplish, specifying what it must not violate, and evaluating whether the results meet the standard.

This is a more demanding role, not a simpler one. Setting clear direction requires deeper understanding of the domain, the users, and the quality standards than maintaining a procedure does.

Quality judgment as a non-delegable skill

One consequence of more capable AI systems is that the bar for quality must rise. When output quality is consistently high, it becomes tempting to lower scrutiny. This is a mistake.

The human role in evaluation is not to catch obvious errors - systems should catch those on their own. The role is to maintain a high standard and to recognize the difference between output that is acceptable and output that is excellent. This judgment is difficult to automate precisely because it requires understanding what "excellent" means in a specific context.

Tool definition and outcome specification as design crafts

In a subtracted system, two artifacts become the primary design surfaces: the tool definitions that describe what the system can do, and the outcome specifications that describe what it should achieve.

Writing clear tool definitions means describing each capability precisely enough that the system can decide when and how to use it. Writing clear outcome specifications means describing success in terms that are specific, measurable, and domain-appropriate.

These are design crafts. They require iteration, testing, and domain expertise. They are also the highest-leverage activities in AI system design, because they shape every interaction without constraining the approach.
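To make the two artifacts concrete, here is one hypothetical way to represent them. The `ToolDefinition` and `OutcomeSpec` classes and the `lookup_order` tool are invented for this example and do not follow any particular vendor's tool-calling schema.

```python
from dataclasses import dataclass, field


@dataclass
class ToolDefinition:
    name: str
    description: str              # what the tool does and when it is appropriate
    parameters: dict[str, str]    # parameter name -> plain-language description


@dataclass
class OutcomeSpec:
    goal: str
    success_criteria: list[str]
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Format the spec as system prompt text: goal, success criteria, constraints."""
        lines = [self.goal, "", "Success means:"]
        lines += [f"- {criterion}" for criterion in self.success_criteria]
        if self.constraints:
            lines += ["", "Constraints:"]
            lines += [f"- {constraint}" for constraint in self.constraints]
        return "\n".join(lines)


lookup_order = ToolDefinition(
    name="lookup_order",
    description="Fetch an order's status and items. Use when the customer asks about a specific order.",
    parameters={"order_id": "The order identifier from the customer's confirmation email."},
)

spec = OutcomeSpec(
    goal="Resolve the customer's issue using our knowledge base, account history, and return policy.",
    success_criteria=["The response is in the user's language.", "The customer leaves satisfied."],
    constraints=["Never disclose customer financial data."],
)
rendered = spec.render()
print(rendered)
```

Note what is absent: no ordering of steps, no routing, no instruction about which tool to call first. The description says when the tool applies and leaves the decision to the system.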

The Subtraction Audit

The following checklist applies the Subtraction Discipline to any AI system. It can be used as a one-time assessment or as a recurring review.

Prompt audit

- Read every line of every system prompt. Classify each as O (outcome), C (constraint), or S (scaffolding).

- For each S line: test removing it. Does output quality degrade? If not, remove permanently.

- For each S line that cannot be removed: can it be rewritten as an outcome specification?

- Compute the ratio of O:C:S lines. Track it over time.
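Tracking the O:C:S ratio needs nothing more than a tally of the labels from the first step. A small sketch, assuming each prompt line has already been labeled with a single letter; the `ocs_ratio` name and the sample labels are made up.

```python
from collections import Counter


def ocs_ratio(labels: list[str]) -> dict[str, float]:
    """Share of outcome (O), constraint (C), and scaffolding (S) lines in a prompt."""
    counts = Counter(labels)
    total = sum(counts[key] for key in "OCS")
    if total == 0:
        raise ValueError("no O, C, or S labels found")
    return {key: counts[key] / total for key in "OCS"}


# Labels for a ten-line prompt, as assigned during the audit.
labels = ["O", "C", "S", "S", "S", "C", "S", "O", "S", "S"]
print(ocs_ratio(labels))  # {'O': 0.2, 'C': 0.2, 'S': 0.6}
```

Recording this after each audit gives a simple trend line: a falling S share means the prompt is carrying less compensatory scaffolding.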

Context and retrieval audit

- List every retrieval pipeline step where you decide what the system sees.

- For each step: could the system make this decision if the information were well-organized?

- Assess your information environment: is it discoverable, labeled, and structured?

- Identify one retrieval pipeline step to remove and replace with better organization.

Guardrail audit

- List every rule and constraint. Classify as domain constraint or compensatory rule.

- Test each compensatory rule: does the prevented behavior still occur without the rule?

- Remove compensatory rules whose prevented behavior no longer occurs without them. Strengthen domain constraints that deserve clarity.

Evaluation audit

- List every validation step in your pipeline. For each: what is the error catch rate?

- Identify intermediate checks with low catch rates relative to their cost.

- Design a single comprehensive end-gate that covers all validation requirements.

- Build a feedback loop that improves the end-gate over time.

Role audit

- For each human touchpoint in the system: is this process management or direction-setting?

- Identify process management tasks that could be eliminated by better outcome specifications.

- Assess your tool definitions: are they precise enough for the system to use without procedural guidance?

- Assess your outcome specifications: do they describe success without prescribing method?

Scoring

Count your total checklist items. Score each as done, in progress, or not started. A system with more than half its items in "done" has a strong subtraction posture. A system with most items in "not started" has significant subtraction opportunity.
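The scoring rule can be written down directly. In the sketch below, the two outcomes follow the thresholds just described, reading "most" as more than half. The `"in transition"` label for everything in between is an assumption added here, since the article does not name that middle state.

```python
from collections import Counter

VALID_STATUSES = {"done", "in progress", "not started"}


def subtraction_posture(statuses: list[str]) -> str:
    """Summarize checklist progress using the majority thresholds described above."""
    unknown = set(statuses) - VALID_STATUSES
    if unknown or not statuses:
        raise ValueError(f"expected a non-empty list of {sorted(VALID_STATUSES)}")
    counts = Counter(statuses)
    half = len(statuses) / 2
    if counts["done"] > half:
        return "strong subtraction posture"
    if counts["not started"] > half:
        return "significant subtraction opportunity"
    return "in transition"  # label assumed for illustration


# The checklist above has 19 items across the five audits.
statuses = ["done"] * 10 + ["in progress"] * 5 + ["not started"] * 4
print(subtraction_posture(statuses))  # strong subtraction posture
```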

When NOT to subtract

Subtraction is not always appropriate. Do not subtract:

- Safety-critical constraints. If a rule prevents harm, keep it regardless of whether the system seems to handle it without the rule. Safety margins exist for a reason.

- Regulatory requirements. Compliance rules persist because the regulation persists, not because the system needs the reminder.

- Instructions that encode hard-won domain knowledge. Some instructions capture insights that are genuinely difficult to infer from context. These are worth keeping if the system cannot reliably reconstruct the reasoning.

- Instructions you cannot test. If you cannot verify that removing an instruction does not degrade output, keep it until you can.

The discipline is not "remove everything." It is "remove what is no longer necessary and be rigorous about telling the difference."
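The four exclusions can be treated as a gate that runs before any removal test. The sketch below encodes them as flags on a hypothetical `Instruction` record. Deciding the value of each flag is still a human judgment; the code only makes the rule explicit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    text: str
    safety_critical: bool = False
    regulatory: bool = False
    hard_won_domain_knowledge: bool = False   # insight the system cannot reliably infer
    removal_is_testable: bool = True          # can you verify output quality without it?


def eligible_for_subtraction(instruction: Instruction) -> bool:
    """An instruction is a removal candidate only if none of the four exclusions apply."""
    protected = (
        instruction.safety_critical
        or instruction.regulatory
        or instruction.hard_won_domain_knowledge
    )
    return not protected and instruction.removal_is_testable


candidates = [
    Instruction("Retrieve the top five knowledge base articles before generating a response."),
    Instruction("Never disclose customer financial data.", safety_critical=True, regulatory=True),
]
print([eligible_for_subtraction(item) for item in candidates])  # [True, False]
```

Eligibility only means the instruction may be tested for removal. It is removed only if the test shows that output quality holds without it.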

Conclusion

AI systems rarely fail because they lack instructions. More often they underperform because they carry too many instructions written around limitations that no longer exist.

The Subtraction Discipline is a framework for keeping your systems current with the capabilities available to them. It starts with a simple classification - outcome, constraint, or scaffolding - and extends across retrieval, guardrails, evaluation, and the human role in system design.

The practice is straightforward:

- Audit your system prompts, retrieval pipelines, guardrails, and evaluation architecture.

- Classify each element as durable (outcomes and constraints) or compensatory (scaffolding).

- Test removing compensatory elements. Keep what is necessary. Remove what is not.

- Repeat on a regular cadence. Capability improvements do not stop, and neither should your subtraction practice.

If you want a place to start, try The Per-Line Audit - a 15-minute exercise you can apply to any system prompt today. Classify each line, test removing the scaffolding, and see what happens. Most people find the results surprising.

The Subtraction Discipline extends beyond prompts into context architecture, guardrail design, evaluation consolidation, and outcome-driven planning. Each of these dimensions has its own audit methodology and its own version of the same core question: is this here because the system needs it now, or because an earlier version of the system once did?

Start with one prompt. Remove what does not earn its place. That is the discipline.

This is part of the Subtraction Discipline series on Adaptivearts.ai.