Key Takeaways

- Before MCP, AI tool integration was fragmented. It required custom middleware and unique schema definitions for each AI provider, which led to significant development overhead and a lack of portability.

- The Model Context Protocol (MCP) standardizes AI tool integration, so tools are defined once and used across any MCP-compatible AI model or client.

- MCP simplifies development. SDKs such as FastMCP extract tool definitions from code (type hints, docstrings), and the protocol standardizes discovery and routing, which eliminates manual schema creation and custom "glue code".

- Adopting MCP allows developers to build reusable, standalone AI tool servers that can be connected to custom clients, significantly enhancing flexibility and model interoperability.

Who this is for

AI developers seeking standardized, portable solutions for tool integration.

The Evolution of AI Tool Integration: From Custom Glue to Universal Protocol

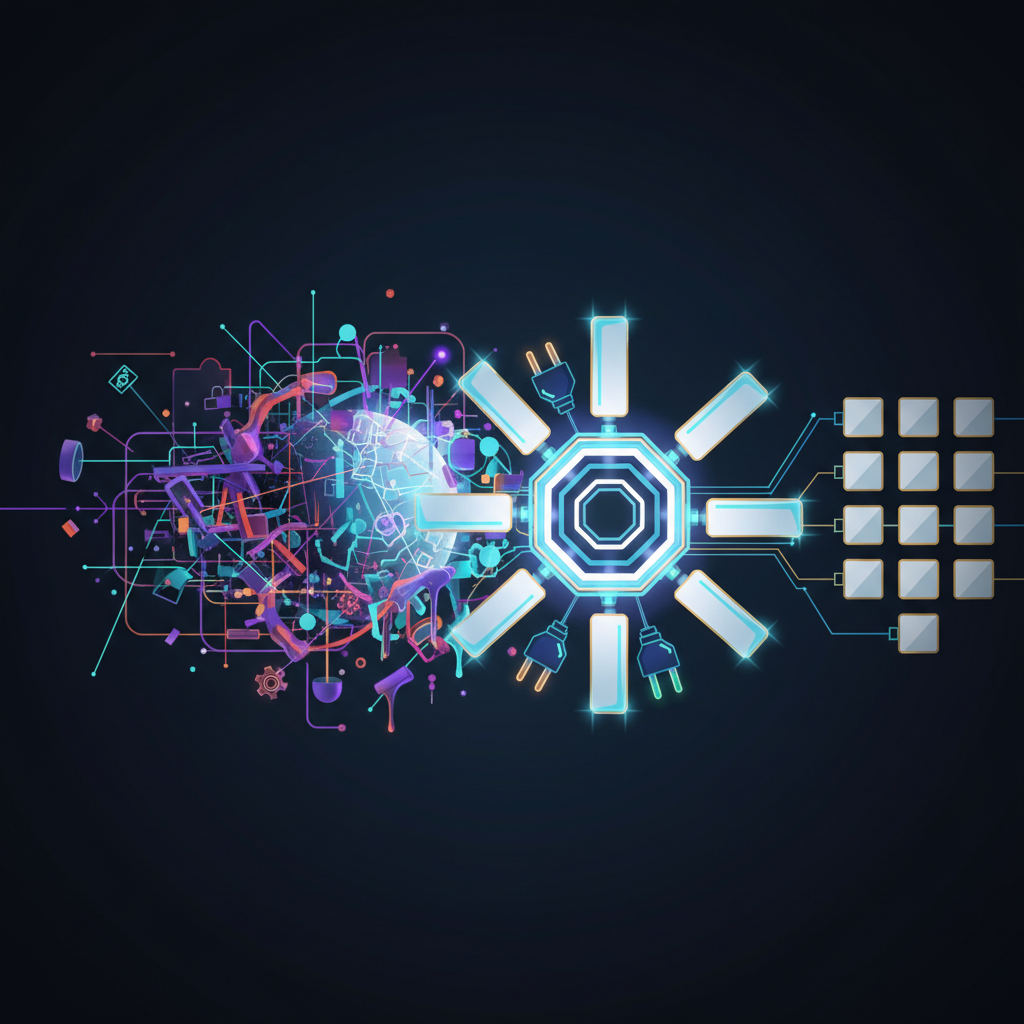

Before the Model Context Protocol (MCP), connecting an AI model to external tools was a fragmented, frustrating process. Every provider had its own schema format, every integration required custom middleware, and switching models meant rewriting everything.

MCP changes this fundamentally. It introduces a standardized "plug" architecture where tools are defined once and work everywhere.

The Problem: Pre-MCP Tool Integration

The Babel of API Schemas

Every major AI provider required a completely different way of defining what a "tool" was:

- OpenAI: Required a specific JSON schema for its "Function Calling" API

- Anthropic (older Claude): Required XML tags or a distinct JSON schema

- Google (Gemini): Used yet another structured JSON format

Switching from GPT-4 to Claude meant manually rewriting all tool definitions.
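To make that rewrite concrete, here is a sketch of the kind of translation helper teams ended up writing by hand. The helper name `openai_to_anthropic` is hypothetical. It reshapes an OpenAI function-calling definition into the `name` / `description` / `input_schema` shape that Anthropic's Messages API uses for tools, and it handles only those top-level fields.

```python
# Hypothetical hand-written converter between two provider tool formats
def openai_to_anthropic(tool: dict) -> dict:
    """Reshape an OpenAI function-calling tool into Anthropic's tool format."""
    fn = tool["function"]
    return {
        "name": fn["name"],
        "description": fn.get("description", ""),
        # Same JSON Schema, but Anthropic names the key "input_schema"
        "input_schema": fn.get("parameters", {"type": "object", "properties": {}}),
    }


openai_tool = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get current weather for a location",
        "parameters": {
            "type": "object",
            "properties": {"location": {"type": "string"}},
            "required": ["location"],
        },
    },
}

claude_tool = openai_to_anthropic(openai_tool)
print(claude_tool["name"], sorted(claude_tool))
```

Multiply this by every tool and every provider you support, and the maintenance burden follows.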

The Glue Code Problem

Beyond definitions, developers had to write custom middleware that:

- Intercepted the AI's tool request

- Parsed the AI's output

- Executed the actual function

- Formatted the result back into text

- Fed it back into the AI's prompt

This code was fragile, model-specific, and non-portable.

```python
# Pre-MCP: The fragile "glue" approach (OpenAI-specific)
import json
import openai


def actual_weather_function(location: str) -> str:
    # Placeholder: swap in a real weather lookup
    return f"Weather data for {location}"


weather_tool_schema = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get current weather for a location",
        "parameters": {
            "type": "object",
            "properties": {
                "location": {"type": "string", "description": "The city name"}
            },
            "required": ["location"],
        },
    },
}

response = openai.chat.completions.create(
    model="gpt-4",
    messages=[{"role": "user", "content": "Weather in Stockholm?"}],
    tools=[weather_tool_schema],
)

# Manually parse and route the tool call
message = response.choices[0].message
if message.tool_calls:
    tool_call = message.tool_calls[0]
    if tool_call.function.name == "get_weather":
        args = json.loads(tool_call.function.arguments)
        result = actual_weather_function(args["location"])
        # Then format the result and send ANOTHER API request...
```

The Solution: MCP Server Architecture

With MCP, you create a standalone server. The Python SDK's FastMCP helper builds each tool's description and input schema from your function's docstring and type hints, and the MCP client handles discovery and routing.

```python
# Post-MCP: Clean, universal, portable
from mcp.server.fastmcp import FastMCP

mcp = FastMCP("WeatherServer")


def actual_weather_function(location: str) -> str:
    # Placeholder: swap in a real weather lookup
    return f"Weather data for {location}"


@mcp.tool()
def get_weather(location: str) -> str:
    """Get the current weather for a specific location."""
    return actual_weather_function(location)


if __name__ == "__main__":
    mcp.run(transport="stdio")
```

- No schemas: FastMCP reads the type hints and docstring and generates the tool's input schema automatically.

- No glue: the MCP client handles tool discovery and routing.

- Universal: this server works with any MCP-compatible client, such as Claude Desktop, Cursor, VS Code, or a custom terminal agent.
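To see what "reads the type hints and docstring" means in practice, here is a standard-library sketch of the idea. It is not FastMCP's implementation, which handles far more types; the helper `tool_schema_from_function` and its small type map are assumptions for illustration. It produces the `name`, `description`, and `inputSchema` fields an MCP server advertises for each tool.

```python
import inspect
from typing import get_type_hints

# Assumption: only a few primitive Python types are mapped to JSON Schema here
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema_from_function(fn) -> dict:
    """Build an MCP-style tool description from a function signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unknown or missing annotations fall back to "string"
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        # Parameters without a default value are required
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


def get_weather(location: str, days: int = 1) -> str:
    """Get the current weather for a specific location."""
    return f"Weather data for {location}"  # hypothetical placeholder body


schema = tool_schema_from_function(get_weather)
print(schema)
```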

Pre-MCP vs. Post-MCP Comparison

| Feature | Before MCP | With MCP |
|---|---|---|
| Tool Definition | Rewritten per LLM provider | Written once, standard protocol |
| Reusability | Trapped inside app codebase | Standalone server, any client can connect |
| Connection | Heavy orchestration frameworks | Standard transports (stdio, HTTP/SSE) |
| Switching Models | Rewrite all integrations | No changes to tool code |
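Whichever transport is used, MCP messages are JSON-RPC 2.0, and over stdio each message is written as a single line of JSON. The sketch below builds two client requests by hand, `initialize` and `tools/call`, to show roughly what an SDK sends on your behalf. The helper name, the client name, and the protocol version string `2024-11-05` are example values; a complete handshake also involves the server's reply and a `notifications/initialized` notification, which are omitted here.

```python
import json


def jsonrpc_request(request_id: int, method: str, params: dict) -> str:
    """Serialize one JSON-RPC 2.0 request as a single newline-terminated line."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}
    # stdio framing is newline-delimited; json.dumps without indent emits no raw newlines
    return json.dumps(message) + "\n"


handshake = jsonrpc_request(1, "initialize", {
    "protocolVersion": "2024-11-05",  # example protocol version
    "capabilities": {},
    "clientInfo": {"name": "cli-client", "version": "0.1"},  # hypothetical client
})
call = jsonrpc_request(2, "tools/call", {
    "name": "get_weather",
    "arguments": {"location": "Tokyo"},
})

print(handshake, end="")
print(call, end="")
```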

Building a Pure CLI Client

The real power emerges when you write your own client. This ~20-line Python script stands in for the AI: it launches your server as a subprocess, performs the MCP initialization handshake, lists the available tools, and calls one directly.

```python
import asyncio

from mcp import ClientSession, StdioServerParameters
from mcp.client.stdio import stdio_client


async def main():
    server = StdioServerParameters(command="python", args=["server.py"])

    async with stdio_client(server) as (read_stream, write_stream):
        async with ClientSession(read_stream, write_stream) as session:
            await session.initialize()

            tools = await session.list_tools()
            print(f"Found tools: {[t.name for t in tools.tools]}")

            result = await session.call_tool("get_weather", {"location": "Tokyo"})
            print(f"Result: {result.content[0].text}")


if __name__ == "__main__":
    asyncio.run(main())
```

Intelligent Approach 1: LLM-Powered Tool Routing

Instead of hard-coding tool calls, insert an LLM between the user and the MCP server. The LLM reads the available tools and decides which to invoke.

```python
import asyncio
import json

from openai import AsyncOpenAI
from mcp import ClientSession, StdioServerParameters
from mcp.client.stdio import stdio_client


async def main():
    llm = AsyncOpenAI()  # reads OPENAI_API_KEY from the environment
    server = StdioServerParameters(command="python", args=["server.py"])

    async with stdio_client(server) as (read_stream, write_stream):
        async with ClientSession(read_stream, write_stream) as session:
            await session.initialize()
            mcp_tools = await session.list_tools()

            # Translate MCP tools into the OpenAI function-calling format
            llm_tools = [{
                "type": "function",
                "function": {
                    "name": t.name,
                    "description": t.description or "",
                    "parameters": t.inputSchema,
                },
            } for t in mcp_tools.tools]

            user_prompt = input("Ask a question: ")
            response = await llm.chat.completions.create(
                model="gpt-4o",
                messages=[{"role": "user", "content": user_prompt}],
                tools=llm_tools,
            )

            message = response.choices[0].message
            if not message.tool_calls:
                # The model answered directly without requesting a tool
                print(message.content)
                return

            decision = message.tool_calls[0].function
            result = await session.call_tool(decision.name, json.loads(decision.arguments))
            print(f"Result: {result.content[0].text}")


if __name__ == "__main__":
    asyncio.run(main())
```

The key insight: if you add five new tools to your server tomorrow, you do not change the client at all. It discovers them through `list_tools()` at startup and passes them to the LLM.
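The translation step in that client is what makes automatic discovery work. The sketch below isolates it as a pure function, using plain dicts with the MCP tool fields `name`, `description`, and `inputSchema` in place of the SDK's tool objects (an assumption so it runs without a server). The function name `mcp_tools_to_openai` and the `get_forecast` tool are hypothetical.

```python
def mcp_tools_to_openai(mcp_tools: list) -> list:
    """Translate MCP tool listings (as dicts) into OpenAI function-calling tools."""
    return [
        {
            "type": "function",
            "function": {
                "name": t["name"],
                # MCP tool descriptions are optional; fall back to an empty string
                "description": t.get("description") or "",
                "parameters": t["inputSchema"],
            },
        }
        for t in mcp_tools
    ]


listing = [
    {"name": "get_weather", "description": "Get the current weather.",
     "inputSchema": {"type": "object", "properties": {"location": {"type": "string"}}}},
]
# Tomorrow the server adds a tool; the translation code stays the same
listing.append({"name": "get_forecast", "inputSchema": {"type": "object", "properties": {}}})

llm_tools = mcp_tools_to_openai(listing)
print([tool["function"]["name"] for tool in llm_tools])
```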

Intelligent Approach 2: Dynamic Model Routing

Why hardcode a single LLM? Build a router that evaluates context and selects the best model dynamically.

```python
import re

import psutil
from litellm import acompletion


def select_best_model(prompt: str, required_tools: list) -> str:
    """Inspect the prompt, tool count, and device state; return a LiteLLM model string."""
    # Privacy: prompts containing an SSN-like pattern stay on a local model
    if re.search(r"\d{3}-\d{2}-\d{4}", prompt):
        return "ollama/llama3"

    # Complexity: heavy tasks get a more capable model
    if len(required_tools) > 5 or "complex" in prompt.lower():
        return "gpt-4o"

    # Resources: on low battery (unplugged), offload to a lightweight cloud model
    battery = psutil.sensors_battery()
    if battery and battery.percent < 20 and not battery.power_plugged:
        return "gemini/gemini-2.5-flash"

    return "gpt-4o-mini"  # Fast default
```

Using LiteLLM as a universal translator, the same OpenAI-format code works across 100+ providers just by changing the model string.
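Because the router reads `psutil` directly, its rules are awkward to unit-test. One variation, sketched here under the assumption that the caller passes the battery state in, keeps the same rules in the same order; `BatteryState` is a hypothetical stand-in for what `psutil.sensors_battery()` returns. The order matters: the privacy check runs first, so a prompt matching the SSN-like pattern stays local even when it is also "complex".

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class BatteryState:
    """Hypothetical stand-in for the result of psutil.sensors_battery()."""
    percent: float
    power_plugged: bool


def select_best_model(prompt: str, required_tools: list,
                      battery: Optional[BatteryState] = None) -> str:
    """Same rules as the router above, with battery state injected for testing."""
    # Privacy first: SSN-like patterns never leave the machine
    if re.search(r"\d{3}-\d{2}-\d{4}", prompt):
        return "ollama/llama3"
    # Complexity: many tools or an explicit "complex" hint
    if len(required_tools) > 5 or "complex" in prompt.lower():
        return "gpt-4o"
    # Resources: unplugged and below 20% battery
    if battery and battery.percent < 20 and not battery.power_plugged:
        return "gemini/gemini-2.5-flash"
    return "gpt-4o-mini"


print(select_best_model("A complex question about 123-45-6789", []))
print(select_best_model("Quick question", [], BatteryState(percent=12, power_plugged=False)))
```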

Intelligent Approach 3: Automatic Fallback Chains

Production systems need resilience. LiteLLM provides automatic fallbacks with a single argument:

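```python
from litellm import acompletion

# Inside the async client from Approach 1, once llm_tools and user_prompt exist
messages = [{"role": "user", "content": user_prompt}]
best_model = select_best_model(user_prompt, llm_tools)

response = await acompletion(
    model=best_model,
    messages=messages,
    tools=llm_tools,
    fallbacks=["gemini/gemini-2.5-flash", "claude-3-haiku-20240307"],
)
```

If the primary call fails (for example, OpenAI returns a 502), LiteLLM retries the request on the next model in the list and translates your OpenAI-format tool definitions (originally discovered from MCP) into that provider's format, with no changes to your calling code.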
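LiteLLM handles this internally. To show the mechanism rather than LiteLLM's actual code, here is a hand-rolled sketch of a fallback chain that tries each model in order and returns the first success. `complete_with_fallbacks` and `fake_call` are hypothetical; `fake_call` simulates a 502 from the primary model so the example runs offline.

```python
import asyncio
from typing import Awaitable, Callable


async def complete_with_fallbacks(
    call_model: Callable[[str], Awaitable[str]],
    models: list,
) -> tuple:
    """Try each model in order; return (model, response) from the first success."""
    errors = []
    for model in models:
        try:
            return model, await call_model(model)
        except Exception as exc:  # a real client would catch provider-specific errors
            errors.append(f"{model}: {exc}")
    raise RuntimeError("All models failed: " + "; ".join(errors))


async def fake_call(model: str) -> str:
    # Hypothetical provider call: the primary model simulates a 502
    if model == "gpt-4o":
        raise ConnectionError("502 Bad Gateway")
    return f"answer from {model}"


model, answer = asyncio.run(
    complete_with_fallbacks(fake_call, ["gpt-4o", "gemini/gemini-2.5-flash"])
)
print(model, answer)
```

Collecting the per-model errors means that when every model fails, the final exception still explains why each attempt failed.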

The Architecture That Modern Agents Use

This is essentially the blueprint behind many autonomous agent systems. Strip away the branding and they are doing what we just built:

- Maintain an LLM connection (the Brain)

- Use MCP to load tool registries (the Hands)

- Run the Tool-Calling Loop to evaluate, execute, and read results

The difference is in autonomy: instead of input(), they listen to APIs; instead of stopping after one answer, they run continuous execution loops; instead of temporary message lists, they use persistent memory.

But at its core? It is just a very elaborate version of the LLM-to-MCP routing loop.
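That loop is small enough to sketch. The version below assumes OpenAI-style chat messages and replaces the LLM and the MCP session with hypothetical synchronous stubs (`fake_llm`, `fake_execute`) so it runs offline; in the async client from Approach 1, `execute_tool` would wrap `await session.call_tool(...)`. Unlike that client, it sends each tool result back to the model as a `tool` message and keeps looping until the model stops requesting tools.

```python
import json


def run_tool_loop(call_llm, execute_tool, user_prompt: str, max_turns: int = 5) -> str:
    """Minimal tool-calling loop: ask the LLM, run requested tools, feed results back."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        reply = call_llm(messages)
        if not reply.get("tool_calls"):
            return reply["content"]  # no tools requested: this is the final answer
        messages.append(reply)  # keep the assistant's tool request in the history
        for call in reply["tool_calls"]:
            args = json.loads(call["function"]["arguments"])
            result = execute_tool(call["function"]["name"], args)
            messages.append({"role": "tool", "tool_call_id": call["id"], "content": result})
    raise RuntimeError("Tool loop did not finish within max_turns")


# Hypothetical stubs standing in for a real LLM and an MCP session
def fake_llm(messages):
    if messages[-1]["role"] == "tool":
        return {"role": "assistant", "content": f"It is {messages[-1]['content']}."}
    return {
        "role": "assistant",
        "content": None,
        "tool_calls": [{
            "id": "call_1",
            "type": "function",
            "function": {"name": "get_weather", "arguments": json.dumps({"location": "Tokyo"})},
        }],
    }


def fake_execute(name, args):
    return f"sunny in {args['location']}"


print(run_tool_loop(fake_llm, fake_execute, "Weather in Tokyo?"))
```

`max_turns` guards against a model that never stops calling tools; a continuously running agent would replace it with its own scheduling and memory.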

Conclusion

The shift from custom glue code to MCP represents one of the most important architectural changes in AI tooling. By standardizing how tools are defined, discovered, and invoked, MCP enables:

- Write once, use everywhere - a single server works across all MCP clients

- Dynamic discovery - clients automatically learn what tools are available

- Model independence - swap LLMs without touching tool code

- Production resilience - combined with a gateway such as LiteLLM, automatic fallbacks across providers

- Composability - chain multiple MCP servers for complex workflows
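When a client connects to several MCP servers, it needs to know which server owns each tool. Here is a minimal sketch, assuming tool names are unique across servers; the class name `ToolRouter` and the server labels are hypothetical, and in a real client each label would map to its own `ClientSession`.

```python
class ToolRouter:
    """Route tool calls to whichever connected server advertised the tool."""

    def __init__(self):
        self._owners = {}  # tool name -> server label

    def register(self, server: str, tool_names: list) -> None:
        for name in tool_names:
            # Refuse ambiguous names instead of silently overwriting an owner
            if name in self._owners:
                raise ValueError(f"Tool {name!r} already provided by {self._owners[name]!r}")
            self._owners[name] = server

    def server_for(self, tool_name: str) -> str:
        return self._owners[tool_name]


router = ToolRouter()
router.register("weather", ["get_weather"])
router.register("files", ["read_file", "list_dir"])
print(router.server_for("read_file"))
```

Raising on a name collision is one design choice; prefixing each tool name with its server label is another option.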